Predicting total healthcare demand using machine learning: separate and combined analysis of predisposing, enabling, and need factors | BMC Health Services Research

Data sources and sample

The 2022 Turkey Health Survey, conducted by the Turkish Statistical Institute (TÜİK), is a comprehensive study evaluating the use of healthcare services, health behaviors, and health perceptions in Turkey. This survey encompasses a wide range of health indicators, including access to healthcare services, health statuses, the prevalence of chronic diseases, nutritional habits, and levels of physical activity across the country. The 2022 Turkey Health Survey was carried out through questionnaires administered to selected sample groups nationwide. It analyzes the health statuses, access to healthcare services, and health-related behaviors of individuals aged 15 and over.

The findings of this survey are critically important for the formulation of health policies and the planning of healthcare services. This research provides significant insights into the demand for and needs related to healthcare services in Turkey. It offers valuable information to policymakers, particularly regarding inequalities in access to healthcare, health behaviors of the population, and health perceptions (see Footnote 1).

Outcome variable

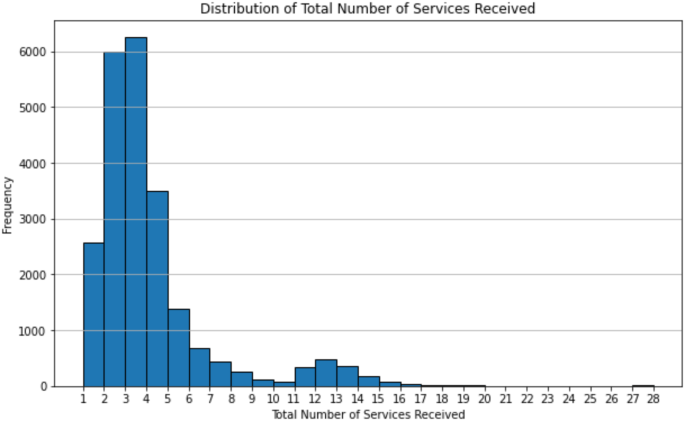

The outcome variable is defined as the “Total Number of Healthcare Services Utilized”. The TSA 2022 health survey does not include this variable directly; it is a composite variable created to represent the number of healthcare services participants received in the past year. It was calculated from multiple questions on the types of services received, including inpatient treatment, outpatient day services, dental services, and other types of healthcare services, for a total of twelve service categories. Each question was coded as “1” if the service was received and “0” if it was not. An additional column was then added to the dataset that sums these 12 columns to give the total number of services received.

The twelve variables used in the study are named as follows: Inpatient Services in the Last 12 Months, Outpatient Day Services in the Last 12 Months, Dental Services in the Last 12 Months, Family Doctor Services in the Last 4 Weeks, Specialist Doctor Services in the Last 12 Months, Physical Therapist Services in the Last 12 Months, Physiotherapy Specialist Services in the Last 12 Months, Kinesiotherapist Services in the Last 12 Months, Psychologist Services in the Last 12 Months, Psychotherapist Services in the Last 12 Months, Psychiatrist Services in the Last 12 Months, and Home Care Services in the Last 12 Months.
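The construction of the composite outcome can be sketched with pandas as follows. This is a minimal illustration, not the authors' code: the column names and the two respondents are hypothetical, since the survey's actual variable codes are not given in the text.

```python
import pandas as pd

# Hypothetical column names for the twelve binary service indicators.
service_cols = [
    "inpatient_12m", "outpatient_day_12m", "dental_12m", "family_doctor_4w",
    "specialist_12m", "physical_therapist_12m", "physiotherapy_specialist_12m",
    "kinesiotherapist_12m", "psychologist_12m", "psychotherapist_12m",
    "psychiatrist_12m", "home_care_12m",
]

# Two made-up respondents: 1 = service received, 0 = not received.
rows = [
    [1, 0, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0],
]
df = pd.DataFrame(rows, columns=service_cols)

# Row-wise sum of the twelve indicators gives the composite outcome.
df["total_services"] = df[service_cols].sum(axis=1)
```

Because each indicator is 0 or 1, the resulting total ranges from 0 to 12 for every respondent.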

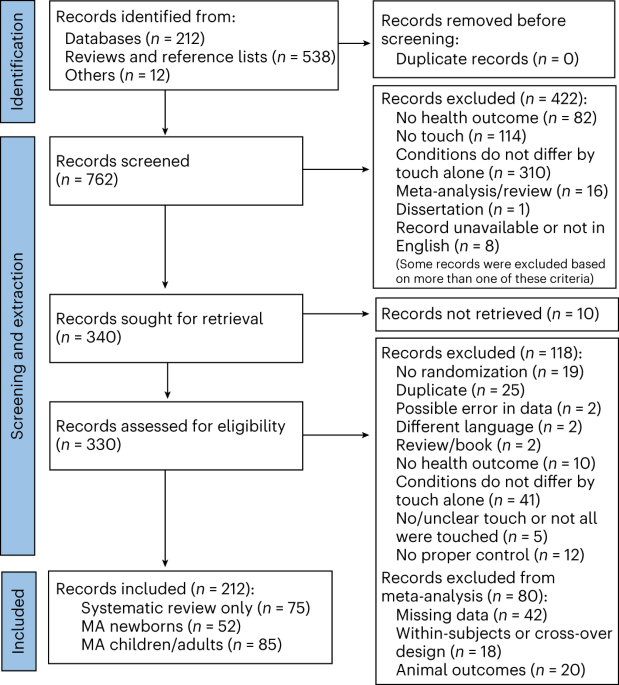

Target variable: total number of services received

Figure 1 illustrates the distribution of the total number of healthcare services utilized within the dataset. The X-axis represents the total number of services received, and the Y-axis represents the frequency of each count. The histogram shows that most individuals have relatively low service utilization, with a larger proportion receiving services 2, 3, or 4 times. Frequency decreases as the number of services increases, so the counts are concentrated on the left side of the histogram and the distribution is right-skewed, with a tail extending to the right. This skewness indicates that a small number of individuals have much higher service utilization and can be considered outliers.

Predictors and feature selection

Within the framework of the Andersen model, the variables classified as “Predisposing Factors” encompass fundamental demographic and socioeconomic characteristics that influence individuals’ access to and utilization of healthcare services. These factors indirectly determine the need for healthcare services, as they shape individuals’ access to and propensity to utilize these services.

- Gender differences are evident in healthcare utilization, with various health conditions and access to healthcare services being influenced by gender-specific disparities. For instance, women typically utilize health check-ups and preventive healthcare services more frequently than men.

- Age is a significant factor directly affecting the need for healthcare services. As age increases, the prevalence of chronic diseases rises, thereby necessitating greater healthcare services.

- The place of birth can present variations in accessible healthcare services and health habits. Rural or urban birthplaces have different dynamics in terms of access to and utilization of healthcare services.

- Education level influences the capacity to benefit from healthcare services and the level of health-related knowledge. Higher education levels are generally associated with better access to healthcare services and a better understanding of health status.

- Married individuals often have a more stable support network compared to those living alone. This is particularly important in the management of chronic diseases and during old age.

- An individual’s employment status can be a determinant of access to health insurance, income level, and consequently, access to healthcare services.

- The nature of employment, such as full-time, part-time, or self-employment, can affect access to health insurance and workplace health services.

Each of these variables plays a critical role in understanding individuals’ access to and utilization of healthcare services within the framework of Andersen’s model. Classifying individuals based on these variables provides essential insights for more effective planning and implementation of healthcare services.

In Andersen’s model, the category of “Enabling Factors” includes the conditions and resources that determine individuals’ physical and economic access to healthcare services. These factors can directly impact access to healthcare, either facilitating or hindering it. Below are explanations of why each of these variables is considered an enabling factor in this context:

- Government-provided health insurance covers a significant portion of treatment costs, thereby reducing out-of-pocket expenses for individuals and facilitating access to healthcare services.

- Private health insurance typically offers more comprehensive and faster services, making it easier to access healthcare. Insured individuals can benefit from prioritized treatment and better service options.

- Personal financial resources can be a determining factor in accessing healthcare services, especially when treatments are not covered by insurance.

- A stable job and income enable individuals to maintain benefits such as health insurance and access healthcare services regularly.

- Income level is a critical factor, particularly for accessing expensive treatments. Households with higher income levels can access healthcare services more easily.

- Delays in appointment processes can hinder access to healthcare services, especially for non-emergency situations.

- Difficult or time-consuming travel to healthcare facilities can be a barrier, particularly for those living in rural or remote areas.

- Economic hardships, especially regarding expensive medical procedures, can hinder access to healthcare services.

- Dental health services are typically expensive, and costs can be a significant barrier to access.

- Prescription medications can be costly, posing a continuous barrier to access, particularly for individuals with chronic illnesses.

- Mental health services can be particularly costly without adequate insurance coverage, restricting access to such services.

- The presence of reliable close relatives who can provide support during crises or health issues can facilitate access to healthcare services.

- Support from the community and surrounding environment can ease access to healthcare services, especially for the elderly and disabled individuals.

- Assistance from neighbors or the community can expedite access to healthcare services, particularly in emergency situations.

These variables represent the various ways and conditions that affect individuals’ access to healthcare services, and therefore hold significant importance in the Andersen model.

In the Andersen model, “Need Factors” are variables that reflect individuals’ objective and subjective needs for healthcare services. These factors indicate individuals’ current health status and urgent needs for healthcare services. The variables listed in the provided table pertain to various chronic diseases and health conditions, directly impacting individuals’ needs for access to healthcare services. Here is an explanation of each of these variables:

- Chronic Diseases (Various Chronic Conditions): Asthma, Chronic Bronchitis, Myocardial Infarction (Heart Attack), Coronary Heart Diseases, Hypertension, Stroke, Arthritis (Joint Diseases), Lower Back and Neck Issues, Diabetes (Sugar Disease), Allergies, Liver Failure, Incontinence, Kidney Diseases, Depression, High Blood Lipids, Alzheimer’s Disease, Celiac Disease, Substance Abuse, Down Syndrome, Autism, and Other Chronic Diseases: Individuals with these chronic conditions require continuous healthcare services. Chronic diseases typically require long-term care, regular monitoring, and treatment.

- Physical and Psychological Conditions: Bodily Pain, Depression, Restlessness: These physical and psychological conditions can influence the frequency and type of healthcare services individuals seek. For instance, a person experiencing constant bodily pain may visit the doctor more frequently.

- Anthropometric Measurements: Height and Weight (or Body Mass Index, which considers both): These measurements reflect individuals’ overall health status and potential health risks. For example, being overweight or underweight can be a risk factor for specific health issues.

- Lifestyle-Related Factors: Smoking and Alcohol Consumption: These lifestyle factors significantly impact individuals’ health and can contribute to the development of chronic diseases.

Need factors are among the most critical determinants of the frequency and type of healthcare services individuals utilize. Identifying these variables aids healthcare providers in planning and implementing interventions tailored to individuals, and is also crucial for targeting and developing public health programs.

Additionally, a combined dataset (50 variables) was created by integrating these three factors, and the impact of all variables together on the total number of services received was analyzed. This study comprehensively examines the demographic and background characteristics of 22,742 participants. The research aims to analyze the distribution of variables such as gender, marital status, place of birth, age group, educational level, employment status, and body mass index (BMI) among the participants.

Since none of the variables in our dataset contain missing values, techniques for handling missing data were not employed in this study. Typically, methods such as imputation, deletion, or interpolation are applied when datasets exhibit incomplete observations. However, given the completeness of our dataset, the implementation of such techniques was deemed unnecessary. The absence of missing data enhances the robustness of our analysis, ensuring that the results are not influenced by potential biases introduced through data imputation or other corrective measures. Consequently, the findings presented in this study are derived from a dataset that remains intact, preserving the integrity and reliability of the statistical analyses conducted.

In the study, the demographic and socioeconomic characteristics of the participants are presented in Table 1. The table illustrates the distribution of various attributes, including gender, marital status, birth place, age groups, education level, employment status, and BMI group. Specifically, the gender distribution shows that 48.2% of the participants are male and 51.8% are female. The majority of the participants are married (66.2%), and 97.7% were born in Turkey. The age distribution is as follows: 7.4% are in the 0–18 age group, 27.4% are in the 19–34 age group, 29.2% are in the 35–49 age group, 22.2% are in the 50–64 age group, and 13.8% are 65 years and older. Regarding education, 10.4% have no formal education, 47.1% completed primary school, 22.3% completed high school, 18.3% have a university or college degree, and 2.0% have a master’s or PhD degree. Employment status indicates that 39.9% are employed, 45.5% are unemployed, and 14.6% are retired. Lastly, the BMI group shows that 0.3% are severely underweight, 3.1% are underweight, 39.2% are normal weight, 36.6% are overweight, 15.6% are obese (Class 1 and 2), and 5.2% are severely obese.

Statistical analysis, processing of missing values and synthetic minority oversampling technique

For this analysis, Python version 3.9.7 was utilized along with several essential libraries, including pandas, matplotlib, numpy, and scikit-learn (sklearn). These libraries provided robust tools for data manipulation, visualization, numerical operations, and machine learning algorithm implementation, respectively.

A thorough examination of the dataset was conducted to address missing values, a critical step to ensure the integrity and performance of the machine learning models. Depending on the nature and context of the data, different strategies were applied to handle these missing values. For columns with sparse missing values, imputation techniques were employed. Numerical columns had missing values filled using methods such as the mean, median, or mode, while categorical variables were handled by filling with the most frequent category or a new category labeled ‘Missing’. In cases where a column had a significant proportion of missing values that could not be reliably imputed, or if the column was deemed non-essential, rows or columns with missing values were removed to maintain consistency and avoid introducing biases.

To prepare the dataset for machine learning algorithms, several preprocessing steps were undertaken, particularly focusing on converting categorical variables into a format suitable for modeling. One of the key techniques used was one-hot encoding, facilitated by the `get_dummies` function from the pandas library. This process converted categorical data into a binary format where each category was represented by a separate column with values of 0 or 1, essential for algorithms that require numerical input. Additionally, although not explicitly mentioned, normalization or standardization of numerical features was considered beneficial, especially for models involving gradient descent optimization, ensuring that all features contributed equally to the model’s learning process.
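The encoding step described above can be sketched as follows. The toy frame, its column names, and the choice to standardize `age` are assumptions made for illustration; only the use of `get_dummies` comes from the text.

```python
import pandas as pd

# Hypothetical respondents with two categorical columns and one numeric column.
df = pd.DataFrame({
    "gender": ["female", "male", "female"],
    "education": ["primary", "university", "high_school"],
    "age": [34, 51, 67],
})

# One-hot encoding: each category becomes its own 0/1 column.
encoded = pd.get_dummies(df, columns=["gender", "education"], dtype=int)

# Optional standardization of a numeric feature (zero mean, unit variance).
encoded["age_std"] = (encoded["age"] - encoded["age"].mean()) / encoded["age"].std(ddof=0)
```

The encoded frame keeps `age` and gains one indicator column per category, such as `gender_female` and `education_primary`.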

The preprocessing steps, including the careful handling of missing values and transformation of categorical variables, were integral to preparing the dataset for machine learning analysis. By leveraging Python 3.9.7 and powerful libraries like pandas, matplotlib, numpy, and sklearn, the data was meticulously cleaned, transformed, and made ready for robust and accurate modeling, ensuring the best possible outcomes from the machine learning algorithms applied.

Machine learning methods

In our study, we applied seven machine learning methods: logistic regression (LR), k-nearest neighbors (KNN), support vector machine (SVM), random forest (RF), decision tree (DT), extreme gradient boosting (XGBoost), and gradient boosting. The selection of these techniques was guided by the studies of Feretzakis et al. [13, 25, 26], which served as reference points for determining the most appropriate models for our analysis. By leveraging these established methodologies, our study ensures methodological rigor and alignment with contemporary research trends in machine learning applications within the healthcare domain.

These methods were utilized to identify the factors affecting the total number of services received by individuals in Turkey and to determine the most significant variables among them.

Consequently, we compared the ability of the seven models to identify the factors influencing the total number of services received by individuals and to determine the most significant variables. The performance of these models provided valuable insights into predicting the overall demand of individuals. Each of these models offered various advantages depending on the data characteristics and structure, contributing significantly to the prediction processes.

Logistic Regression (LR)

Logistic Regression (LR) is a statistical technique used to predict the probability of a target variable belonging to one of two possible groups, for example “yes” or “no,” “sick” or “healthy.” Mathematically, logistic regression estimates the probability P(N = 1), i.e., the probability of the target variable being 1, as a function of the independent variables M. The theory behind logistic regression involves using a logistic (sigmoid) function to constrain the predicted probabilities between 0 and 1. This function maps any real number into the interval (0, 1) [27, 28]. The mathematical formula for the logistic function is as follows:

$$\sigma(z)=\frac{1}{1+e^{-z}}$$

In this formula, z represents the weighted sum of the independent variables. In machine learning, predictions are made using this function, and the output values of the model are probabilities ranging from 0 to 1, obtained by applying the sigmoid function. The advantage of logistic regression is that the predicted probabilities are directly interpretable. Additionally, this method is highly effective and widely used when the target variable is binary [29].
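As a small worked example of the logistic function, the sketch below computes a predicted probability from a weighted sum z. The coefficients, bias, and feature values are hypothetical and chosen only for illustration.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic function, branched on the sign of z to avoid overflow in exp."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def predict_proba(weights, bias, features):
    """P(target = 1) for one observation: sigmoid of the weighted sum z."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)

# Hypothetical coefficients and feature values (not estimates from the study).
p = predict_proba(weights=[0.8, -0.5], bias=0.1, features=[1.0, 2.0])
```

Here z = 0.1 + 0.8 - 1.0 = -0.1, so the predicted probability is slightly below 0.5.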

K-Nearest Neighbors (KNN)

K-Nearest Neighbors (K-NN) is a widely used supervised learning technique in data mining and machine learning. The method learns from the “similarity” between data points. K-NN is used for both classification and regression tasks [30, 31]. In both cases, the K nearest training examples in the dataset are used as input, and the output depends on whether K-NN is applied for classification or regression. K-NN has been shown to produce good results in various fields [32].

K-NN also has significant applications in the healthcare sector. For instance, a study using demographic and health survey data from India employed the K-NN algorithm for the detection and classification of diabetes. This study demonstrated that K-NN can make accurate classifications in health data [29].
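The classification variant of K-NN can be sketched in a few lines of plain Python. This is an illustrative implementation with Euclidean distance and majority voting on made-up points, not the configuration used in the study.

```python
import math
from collections import Counter

def knn_predict(train_X, train_y, query, k=3):
    """Label a query point by majority vote among its k nearest training points."""
    distances = sorted(
        (math.dist(x, query), label) for x, label in zip(train_X, train_y)
    )
    nearest_labels = [label for _, label in distances[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]

# Hypothetical two-feature training data with two well-separated classes.
train_X = [(1, 1), (1, 2), (2, 1), (6, 6), (7, 6), (6, 7)]
train_y = [0, 0, 0, 1, 1, 1]

label_low = knn_predict(train_X, train_y, (2, 2))
label_high = knn_predict(train_X, train_y, (6, 5))
```

For regression, the vote would be replaced by the average of the neighbors' target values.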

Support Vector Machine (SVM)

Support Vector Machine (SVM) is a powerful classification algorithm that works by creating a hyperplane that maximizes the separation between two classes. SVM is effective even in high-dimensional datasets and aims to maximize the margin between the classes. This algorithm is widely used in classification problems and has significant applications in health surveys [29, 31].

The Support Vector Classifier (SVC) is a discriminative classifier that distinguishes between two classes by drawing a separating plane. The linear SVC draws a line between two classes, classifying all data points on one side of the line as one class and those on the other side as the second class. This line is chosen not only to separate the two classes but also to stay as far away as possible from the nearest points of both classes [33].

The various applications of SVM in health surveys demonstrate the algorithm’s flexibility and accuracy. For instance, in a study conducted to predict the nutritional status of pregnant women in Bangladesh, SVM was used and found to achieve high accuracy rates in determining nutritional status [34]. Additionally, another study predicting under-vaccination status among children under five in East Africa also used SVM, yielding successful results [35]. Furthermore, research conducted to predict depression among secondhand smokers in Korea found that SVM could accurately predict depression risk by analyzing various demographic and health data [36].
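The maximum-margin idea described above can be made concrete with a short sketch. It does not train an SVM; it only evaluates a given separating line, whose weights `w` and offset `b` are hypothetical, and measures how far the closest point lies from it.

```python
import math

def decision_value(w, b, x):
    """Signed score w.x + b; its sign gives the predicted side of the hyperplane."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def classify(w, b, x):
    return 1 if decision_value(w, b, x) >= 0 else -1

def geometric_margin(w, b, points, labels):
    """Smallest signed distance from any labeled point to the hyperplane."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(y * decision_value(w, b, x) / norm for x, y in zip(points, labels))

# Hypothetical hyperplane x1 + x2 - 3 = 0 and four labeled points.
w, b = (1.0, 1.0), -3.0
points = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0), (3.0, 3.0)]
labels = [-1, -1, 1, 1]
margin = geometric_margin(w, b, points, labels)
```

A linear SVM searches over `w` and `b` for the separating hyperplane that makes this margin as large as possible.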

Random Forest (RF)

Random Forest (RF) is an ensemble learning technique that combines the predictions of multiple decision trees. In this method, each decision tree is independently trained using randomly selected data subsets, and the model’s output is the majority vote of the trees (for classification) or the average of their predictions (for regression). RF is effectively used for both classification and regression problems, enhancing the overall model performance [29]. Random Forest also corrects the tendency of decision trees to overfit training sets [37].

RF adds predictive power to the model by developing trees that consider the best feature from a randomly chosen subset of features, rather than searching for the best feature while splitting each node. This approach typically results in a model that performs better and generalizes more effectively. Consequently, the risk of overfitting is reduced, and the generalization capability is increased [33].
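The two sources of randomness described above, bootstrap samples and random feature subsets, can be sketched as follows. To keep the example short, one-split decision stumps stand in for full decision trees; this is a simplification for illustration, and the data are made up.

```python
import random
from collections import Counter

def majority(labels):
    return Counter(labels).most_common(1)[0][0]

def fit_stump(X, y, feature_ids):
    """Best single-threshold split among the candidate features."""
    best = None  # (errors, feature, threshold, left_label, right_label)
    for f in feature_ids:
        values = sorted(set(row[f] for row in X))
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            l_lab, r_lab = majority(left), majority(right)
            errors = sum(lab != l_lab for lab in left) + sum(lab != r_lab for lab in right)
            if best is None or errors < best[0]:
                best = (errors, f, t, l_lab, r_lab)
    if best is None:  # no split possible: always predict the majority label
        m = majority(y)
        return (0, float("inf"), m, m)
    return best[1:]

def stump_predict(stump, row):
    f, t, l_lab, r_lab = stump
    return l_lab if row[f] <= t else r_lab

def fit_forest(X, y, n_trees=25, n_features=1, seed=0):
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(X)) for _ in X]            # bootstrap sample
        feats = rng.sample(range(len(X[0])), n_features)    # random feature subset
        forest.append(fit_stump([X[i] for i in idx], [y[i] for i in idx], feats))
    return forest

def forest_predict(forest, row):
    """Majority vote over the individual learners."""
    return majority([stump_predict(s, row) for s in forest])

# Hypothetical data: two features, two well-separated classes.
X = [(i / 10, i / 10 + 0.05) for i in range(10)] + \
    [(5 + i / 10, 5 + i / 10 + 0.05) for i in range(10)]
y = [0] * 10 + [1] * 10
forest = fit_forest(X, y)
```

Each learner sees a different resample and a different feature, yet the vote across all of them is stable, which is the mechanism behind the reduced overfitting noted above.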

Decision Tree (DT)

A Decision Tree (DT) is a modeling technique that branches out into a chain of decisions and outcomes, representing the data set. In this method, each node performs a test on a feature; the branches represent the results of the test, while the leaves show the predicted outcomes. Decision trees are widely used in classification and regression problems and have the advantage of easy visualization. They also use various measures such as the Gini index or information gain to ensure the separation of features and classes in the data set [38, 39].
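As a brief illustration of the Gini index mentioned above, the sketch below computes the impurity of a node and the weighted impurity of a candidate split. The label lists are made up for the example.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def split_gini(left, right):
    """Impurity of a split: child impurities weighted by child size."""
    n = len(left) + len(right)
    return len(left) / n * gini(left) + len(right) / n * gini(right)

parent = [0, 0, 1, 1]
pure_split = split_gini([0, 0], [1, 1])    # children contain one class each
mixed_split = split_gini([0, 1], [0, 1])   # children are as mixed as the parent
```

A tree-building algorithm prefers the split with the lowest weighted impurity, so the pure split would be chosen over the mixed one.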

When examining the recent literature on the applications of decision trees in the healthcare field, it is evident that this method has been effectively used in various studies. For instance, Kalayou et al. [40] investigated the use of machine learning methods with demographic and health survey data to predict acute respiratory infections in Ethiopian children under the age of five. This study demonstrated that decision trees are an effective tool for predicting disease risks based on various demographic characteristics. Decision trees provide high explainability in such health data and offer ease in visualizing the relationships between features.

Similarly, Zemariam et al. [41] used supervised machine learning algorithms to classify and predict anemia among young girls in Ethiopia. In this research, decision trees played a crucial role in understanding and classifying the key factors determining anemia status. The study emphasized the need to consider performance metrics such as sensitivity and specificity, especially in cases of data imbalance.

Another study by Alie and Negesse [42] applied machine learning methods to predict HIV testing services among adolescents in Ethiopia. In this research, decision trees were effectively used to analyze the impact of different socioeconomic and demographic factors. It was observed that decision trees provide valuable insights for policymakers by visualizing these complex relationships.

Finally, Kim et al. [43] developed a machine learning model using multi-faceted lifestyle data to predict the quality of life of middle-aged adults in South Korea. In this study, decision trees were effectively used to identify and visualize the interactions between multiple factors affecting quality of life. Such models provide transparency and comprehensibility in decision-making processes and play a crucial role in the development of health policies.

In conclusion, decision trees stand out as an effective tool in various health-related research, especially in identifying risk factors, predicting diseases, and planning health services. The ease of interpretation and visualization offered by this method provides significant advantages in analyzing complex health data and in the policy-making processes.

XGBoost

XGBoost (Extreme Gradient Boosting) is an ensemble learning model developed using the gradient boosting algorithm, known for its performance and speed. It delivers high accuracy rates, especially on large datasets, and includes regularization to reduce overfitting issues [44, 45]. XGBoost was developed by Chen and Guestrin in 2016 [46], based on the boosting algorithm. This method typically combines many weak learners, often decision trees, to create a more robust and accurate model.

XGBoost employs a method for calculating the importance scores of features to optimize tree structures. When creating the tree structure, features are arranged according to their importance; less important features are placed at lower levels, while more important ones are at higher levels. This arrangement contributes to a general improvement by ensuring that the trees branch out less and become more detailed. XGBoost is capable of delivering quick and accurate results even on large datasets.

XGBoost is an application of gradient-boosted decision trees designed for speed and performance. It is used for supervised learning problems where there are multiple features and a target variable in the training data. Known for its efficiency and prediction accuracy, XGBoost leverages the gradient boosting framework to enhance its performance [47].

Gradient boosting

Gradient Boosting is another ensemble machine learning technique used for classification and regression tasks. It builds the model incrementally from weak learners, usually decision trees, and generalizes by optimizing an arbitrary differentiable loss function. Due to its ability to enhance model accuracy, Gradient Boosting is effective for predictive modeling tasks [48].
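The incremental construction described above can be sketched for the simplest case: squared-error loss with one-split regression stumps as weak learners. The data, the learning rate, and the number of rounds are assumptions for illustration, not the study's settings.

```python
def fit_stump(xs, residuals):
    """Regression stump: a threshold plus the mean residual on each side."""
    best = None  # (sse, threshold, left_mean, right_mean)
    values = sorted(set(xs))
    for lo, hi in zip(values, values[1:]):
        t = (lo + hi) / 2
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        sse = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, t, lm, rm)
    return best[1:]

def boost(xs, ys, n_rounds=5, learning_rate=0.5):
    base = sum(ys) / len(ys)          # initial prediction: the mean target
    preds = [base] * len(xs)
    stumps = []
    for _ in range(n_rounds):
        # Residuals are the negative gradient of the loss 0.5 * (y - p) ** 2.
        residuals = [y - p for y, p in zip(ys, preds)]
        t, lm, rm = fit_stump(xs, residuals)
        stumps.append((t, lm, rm))
        preds = [p + learning_rate * (lm if x <= t else rm) for x, p in zip(xs, preds)]
    return base, learning_rate, stumps

def predict(model, x):
    base, learning_rate, stumps = model
    return base + sum(learning_rate * (lm if x <= t else rm) for t, lm, rm in stumps)

# Hypothetical one-feature data with a step in the target.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1, 1, 1, 1, 5, 5, 5, 5]
model = boost(xs, ys)
```

Each round fits a stump to what the current ensemble still gets wrong, so the prediction for the low group moves from the mean (3) toward 1 and the high group toward 5.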

This method has been found effective in evaluating malnutrition risk factors and predicting malnutrition using machine learning approaches among women in Bangladesh [49]. Additionally, a Gradient Boosting model used to improve mental health predictions in refugee camps in Sri Lanka has shown increased accuracy in predictions in this area [50]. Yin, Cao, and Sun [51] utilized this technique to examine the non-linear relationships between population density and waist-to-hip ratio, demonstrating the effectiveness of Gradient Boosting decision trees in modeling such complex relationships.

Model assessment

The performance of machine learning models was evaluated using key metrics to identify factors affecting healthcare service demand and determine the most influential variables. Seven models were compared, each offering valuable insights based on different data characteristics. These metrics are essential for assessing model accuracy and reliability.

Recall (Sensitivity) measures the proportion of true positive cases correctly identified. A high recall (≥ 0.80) in our study highlights the models’ effectiveness in predicting healthcare demand. Precision reflects the proportion of true positive predictions among all positive predictions, indicating the model’s ability to minimize false positives. The F1 Score balances precision and recall, representing overall performance by accounting for false positives and false negatives. High F1 scores suggest reliable and balanced models. The Area Under the ROC Curve (AUC) assesses a model’s ability to distinguish between classes, with higher scores indicating superior performance [52].

Table 2 summarizes the key metrics used to evaluate the performance of the machine learning models in predicting healthcare service demand, emphasizing the importance of recall, precision, and AUC in ensuring model reliability and accuracy.

These formulas are the mathematical expressions of the fundamental metrics used to evaluate machine learning models’ performance. Recall, precision, F1 score, and ROC AUC are critical for understanding the model’s accuracy, sensitivity, and overall performance. These metrics are widely used in model evaluation and comparison processes [57].
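Using the standard definitions (recall = TP / (TP + FN), precision = TP / (TP + FP), and F1 as the harmonic mean of the two), the metrics can be computed for one class as sketched below. The label vectors are made up, and the text does not state how per-class values were averaged across classes, so no averaging is shown.

```python
def per_class_metrics(y_true, y_pred, positive):
    """Precision, recall, and F1 when `positive` is treated as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Hypothetical true and predicted class labels.
y_true = [1, 1, 1, 1, 0, 0, 2, 2]
y_pred = [1, 1, 1, 0, 0, 1, 2, 1]
precision, recall, f1 = per_class_metrics(y_true, y_pred, positive=1)
```

In this toy case class 1 has 3 true positives, 2 false positives, and 1 false negative, giving a precision of 0.6 and a recall of 0.75.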

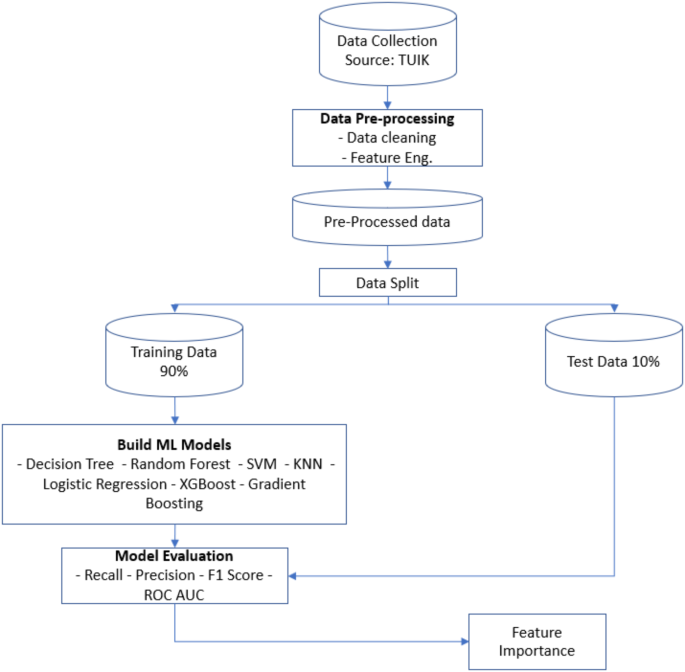

The workflow diagram (Fig. 2) provides a comprehensive approach to building and evaluating machine learning models using data from TÜİK. It begins with data collection and proceeds to data pre-processing, involving cleaning and feature engineering. The pre-processed data is then split into training (90%) and testing (10%) sets. Various machine learning models, including Decision Tree, Random Forest, SVM, KNN, Logistic Regression, XGBoost, and Gradient Boosting, are trained using the training data. These models are evaluated based on Recall, Precision, F1 Score, and ROC AUC metrics to determine their effectiveness. Additionally, feature importance analysis is conducted to identify the most influential features. This systematic process ensures a thorough evaluation and selection of the best-performing model while providing insights into the key factors driving model predictions.

Analysis steps

- Data Collection: The data underpinning this study were obtained from the 2022 Turkey Health Survey microdata provided by the Turkish Statistical Institute (TÜİK). TÜİK offers comprehensive and reliable datasets nationwide, providing valuable resources for health-related research. These data encompass various demographic, socio-economic, and health-related variables influencing healthcare demand.

- Data Pre-processing: Following data collection, the raw data undergo several pre-processing steps. Initially, data cleaning is performed to identify and appropriately address missing values, correct outliers, and resolve inconsistencies within the dataset. Data cleaning is a crucial step to enhance the accuracy and reliability of analyses. Subsequently, feature engineering is conducted, where new features (variables) are derived from the raw data, and existing features are transformed to improve the model’s performance.

- Data Split: After completing the pre-processing stage, the dataset is divided into training and test sets. In this study, 90% of the dataset is allocated as training data, while the remaining 10% is designated as test data. The training data are used to train machine learning models, whereas the test data are reserved for evaluating the models’ performance.

- Balancing Data Distributions in Supervised Learning with a SMOTE Application: In this study, Synthetic Minority Over-sampling Technique (SMOTE) was applied to address class imbalance before training multiple classifiers, including Decision Tree, Random Forest, SVM, KNN, Logistic Regression, XGBoost, and Gradient Boosting. The initial dataset exhibited a significant imbalance, with Class 1 (18,725 instances) vastly outnumbering the other classes, particularly Class 3 (16 instances) and Class 0 (137 instances). This imbalance could lead to biased models that favor the majority class while underperforming on minority classes. To mitigate this issue, two different SMOTE strategies were implemented. First, targeted SMOTE was applied to Classes 0, 2, and 3, increasing their instance count to 5,000 each, ensuring better representation without unnecessarily inflating the dataset. Additionally, global SMOTE was tested, which equalized all classes to match the largest class. While both approaches improved class distribution, the impact on ROC AUC scores was limited, possibly due to the extremely low initial counts of Class 3 and Class 0. By integrating SMOTE and class weighting techniques, the models became more sensitive to minority classes, leading to improvements in recall and overall model fairness. However, the presence of highly underrepresented classes in the original dataset limited the extent of improvement. This suggests that in cases of extreme imbalance, additional techniques such as data augmentation, feature engineering, or alternative modeling approaches may be necessary for further performance enhancement.

- Building ML Models: Various machine learning models are trained on the training data. This study employs Decision Trees, Random Forest, Support Vector Machines (SVM), K-Nearest Neighbors (KNN), Logistic Regression, XGBoost, and Gradient Boosting models. These models are trained and optimized to predict healthcare demand.

Various hyperparameters were tested to improve the models’ performance. The settings that produced the best scores were selected for the final model configuration and are presented in Table 3.

- Model Evaluation: The trained models are assessed using the test data, employing key performance metrics such as recall, precision, F1-score, and the area under the receiver operating characteristic curve (ROC AUC). These metrics are essential for evaluating both the predictive accuracy and the generalization capability of the models. In particular, the representation of F1-score and ROC AUC values is based on the methodology outlined in the study by Feretzakis et al. [26, 27], ensuring consistency with established research standards in machine learning-based healthcare evaluations.

- Feature Importance Determination: Finally, the feature importance of the models is analyzed. This step identifies which factors most significantly influence healthcare demand. Determining feature importance provides critical insights for shaping health policies and optimizing resource allocation.

Feature importance is a metric used in a machine learning model to measure the contribution of independent variables (features) to the model’s prediction performance. It is a concept particularly prevalent in decision trees and ensemble models (a combination of multiple models). Feature importance values help determine which features are most critical in the model’s decision-making process. These values indicate which features the model weights more heavily, allowing analysts and data scientists to identify key features and develop more meaningful and effective models [58].
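The text does not state how the importance values were computed. One model-agnostic option, shown here only as an illustration and not as the study's method, is permutation importance: shuffle one feature at a time and record how much accuracy drops. The toy model and data below are hypothetical.

```python
import random

def accuracy(model, X, y):
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)

def permutation_importance(model, X, y, n_repeats=5, seed=0):
    """Mean drop in accuracy after shuffling each feature column in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + (v,) + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances

# Hypothetical fitted model: it uses feature 0 only and ignores feature 1.
def toy_model(row):
    return 1 if row[0] > 0.5 else 0

X = [(i / 10, (i * 7) % 10 / 10) for i in range(10)]
y = [toy_model(row) for row in X]
importances = permutation_importance(toy_model, X, y)
```

Shuffling the ignored feature leaves every prediction unchanged, so its importance is zero, while shuffling the feature the model relies on lowers accuracy.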

This systematic analysis process facilitates a better understanding and prediction of healthcare service demand. The findings provide significant insights for planning and developing healthcare services and policies.
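The data split and oversampling steps listed above can be sketched in plain Python. This is a simplified illustration of the idea behind SMOTE (new minority points are interpolated between a minority point and one of its nearest minority neighbors), not the library implementation used in the study; the function names, the value of `k`, and the data are hypothetical. The sketch oversamples only the training portion, which is an assumption consistent with applying SMOTE before training.

```python
import math
import random

def split_90_10(rows, seed=0):
    """Shuffle and split into 90% training and 10% test rows."""
    rng = random.Random(seed)
    shuffled = list(rows)
    rng.shuffle(shuffled)
    cut = round(0.9 * len(shuffled))
    return shuffled[:cut], shuffled[cut:]

def smote_like(minority, n_new, k=2, seed=0):
    """Create n_new synthetic points by interpolating toward nearby minority points."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: math.dist(base, p))[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment from base to neighbour
        synthetic.append(tuple(b + gap * (n - b) for b, n in zip(base, neighbour)))
    return synthetic

# Hypothetical row identifiers and minority-class feature vectors.
train, test = split_90_10(range(100))
minority_train = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
synthetic = smote_like(minority_train, n_new=5)
```

Because every synthetic point lies on a segment between two existing minority points, a class with very few original instances yields synthetic points confined to a small region, which may help explain the limited gains reported for the smallest classes.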
