Blockchain-enabled federated learning with edge analytics for secure and efficient electronic health records management

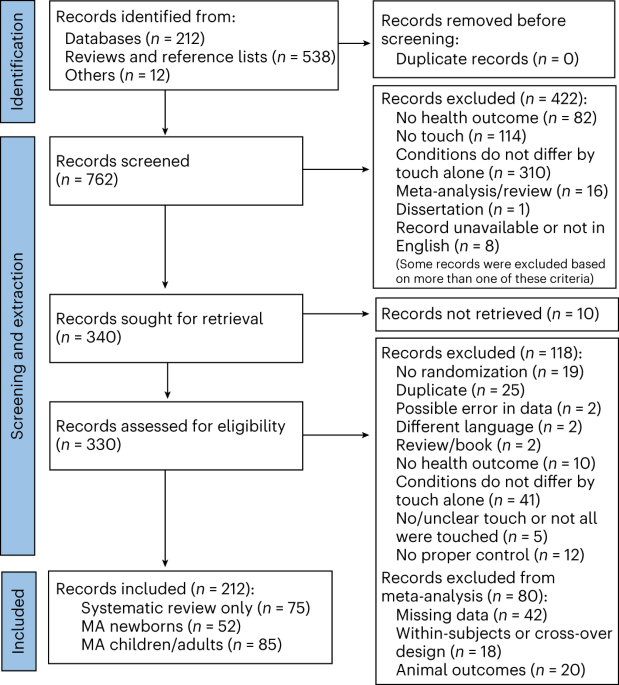

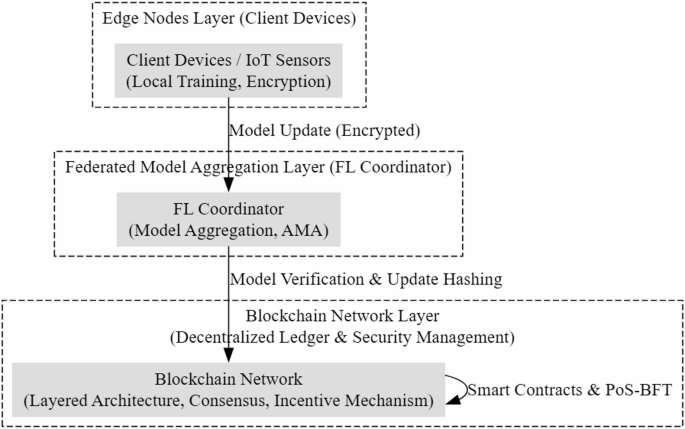

Blockchain-based Federated Learning (BCFL) offers a promising solution for decentralized, privacy-preserving machine learning across multiple institutions, especially in sensitive domains like healthcare. However, its practical adoption is limited by several critical challenges. High computational costs from energy-intensive consensus mechanisms like Proof-of-Work (PoW) make BCFL unsuitable for resource-constrained environments. Additionally, excessive communication overhead from frequent model synchronization hinders scalability in large networks. Existing BCFL models also struggle with non-IID data distributions, which are common in federated healthcare scenarios, leading to biased or suboptimal global models. Moreover, the absence of effective incentivization mechanisms reduces sustained and honest participation by decentralized nodes, undermining collaborative efforts. To address these issues, this study introduces the Efficient and Privacy-Preserving Blockchain-Enabled Federated Learning (EPP-BCFL) framework. The proposed system features a Layered Blockchain Architecture (LBA) that enhances secure and efficient communication while minimizing latency. To protect data privacy, it incorporates a Privacy-Preserving Model Aggregation (PPMA) mechanism using homomorphic encryption and differential privacy, ensuring that raw data remains local and resistant to inference attacks. A core innovation is the Adaptive Model Aggregation (AMA) module, which dynamically adjusts aggregation based on data heterogeneity, node reliability, and device capabilities, thereby improving model fairness, convergence, and resilience. The architecture of the EPP-BCFL framework consists of three primary layers:

1. Edge Nodes Layer (Client Devices).
2. Federated Model Aggregation Layer (FL Coordinator).
3. Blockchain Network Layer (Decentralized Ledger and Security Management).

Figure 1 shows the layered blockchain architecture of the proposed EPP-BCFL framework. Each of these layers plays a crucial role in ensuring the efficiency, privacy, and scalability of the federated learning process.

Figure 1. Layered blockchain architecture.

The Efficient and Privacy-Preserving Blockchain-Enabled Federated Learning (EPP-BCFL) Framework is designed to address key challenges in blockchain-based federated learning (BCFL), such as high computational costs, communication delays, and privacy risks. The architecture follows a tree-like structure, dividing the system into three primary layers: Edge Nodes Layer, Federated Model Aggregation Layer (FL Coordinator), and Blockchain Network Layer. Each layer is responsible for specific tasks that contribute to the scalability, security, and efficiency of the federated learning process. At the base of the architecture, the Edge Nodes Layer (Client Devices) consists of distributed client devices that generate and process local training data. These edge nodes apply differential privacy and homomorphic encryption to ensure that sensitive data remains protected before being transmitted for aggregation. This approach mitigates risks associated with data leakage while enabling secure federated learning. Additionally, edge nodes handle data sharing and preprocessing before forwarding model updates to the next layer. The Edge Nodes Layer is represented in the architecture with two components: one focusing on privacy-preserving techniques such as differential privacy and homomorphic encryption, and another responsible for data sharing and local processing. Above the Edge Nodes Layer is the Federated Model Aggregation Layer (FL Coordinator), which acts as an intermediary between the distributed clients and the blockchain network. This layer receives encrypted model updates from edge nodes and performs adaptive model aggregation to improve learning efficiency across non-IID (non-independent and identically distributed) data. The Adaptive Model Aggregation (AMA) mechanism enhances model accuracy by addressing data heterogeneity across different client devices.

By implementing an efficient federated model aggregation strategy, this layer ensures that model updates are securely combined without exposing raw data, thereby strengthening privacy and security. The Blockchain Network Layer is positioned at the top of the architecture, ensuring tamper-proof storage, decentralized security, and efficient model update verification. This layer consists of a Layered Blockchain Architecture (LBA) that optimizes communication overhead by distributing responsibilities across different blockchain layers.

Unlike conventional blockchain-based federated learning approaches that suffer from excessive energy consumption and latency, the LBA incorporates a lightweight consensus mechanism to enhance efficiency. This ensures that transactions, such as model updates, are verified in a decentralized yet computationally feasible manner.

An essential feature within the Blockchain Network Layer is the incentive mechanism, which encourages active participation in the federated learning process. In the EPP-BCFL framework, the incentive mechanism is designed to reward edge nodes based on their level of participation and the quality of their contributions. However, the current work does not specify how these incentives are calculated or distributed. Future implementations may adopt blockchain-native mechanisms such as tokenomics (e.g., distributing crypto tokens for validated model updates) or reputation systems (e.g., tracking node reliability and accuracy over time). Smart contracts can automate reward distribution and ensure fair and transparent evaluation across all federated participants. A well-defined incentive scheme is essential for practical healthcare deployments, where data-sharing entities must be compensated to maintain sustained engagement and cooperation. Since federated learning relies on voluntary contributions from edge devices, an incentive-based approach motivates participants to contribute high-quality data and model updates. Figure 2 illustrates the hierarchical flow of data and model updates from edge nodes to the FL coordinator and finally to the blockchain layer.

Figure 2. Overview of the proposed work.

This layered approach enhances the privacy, security, and efficiency of federated learning by ensuring that computations are distributed effectively while maintaining robust protection mechanisms. The integration of privacy-preserving techniques, adaptive aggregation, lightweight blockchain consensus, and incentive-driven participation makes EPP-BCFL an optimized solution for blockchain-based federated learning. The structured division of responsibilities among layers significantly minimizes privacy risks, computational burdens, and communication delays, making the framework suitable for large-scale deployment.

Edge nodes layer with integrated edge analytics

To enhance local intelligence and reduce central processing overhead, the EPP-BCFL framework integrates edge analytics into the Edge Nodes Layer. Edge analytics refers to the ability of edge devices to perform real-time data processing, feature extraction, and anomaly detection before participating in federated learning. Each device conducts localized statistical analysis and lightweight inference to identify data inconsistencies, abnormal patterns, or sudden drifts in distribution that could compromise training quality or signal potential adversarial activity.

This local intelligence improves model robustness by allowing devices to filter noisy or poisoned data and only forward meaningful updates. The anomaly scores are securely encrypted and transmitted alongside model updates for further validation by the blockchain network. Additionally, edge analytics facilitates vertical FL by enabling partial training across feature-siloed datasets, where different institutions may own different parts of a patient’s medical profile (e.g., hospital owns lab results, pharmacy owns prescriptions).

The computational overhead for edge analytics is minimized using efficient statistical methods (e.g., Z-score, PCA) and shallow inference models. These methods add negligible cost (O(M)) relative to deep model training, making edge analytics suitable even for resource-constrained IoT devices.
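The Z-score screening mentioned above can be illustrated with a minimal sketch. The function name, the threshold, and the sample readings are hypothetical and are not taken from this work; the sketch only shows that the check is a single pass over a window of local readings, so its cost is linear in the window length.

```python
import statistics


def zscore_anomalies(readings, threshold=3.0):
    """Return the indices of readings whose absolute z-score exceeds threshold."""
    mean = statistics.fmean(readings)
    std = statistics.pstdev(readings)
    if std == 0:
        return []  # all readings identical, nothing stands out
    return [i for i, x in enumerate(readings) if abs(x - mean) / std > threshold]


# Hypothetical heart-rate window (beats per minute) with one implausible spike.
window = [72, 75, 71, 74, 73, 70, 76, 190]
flagged = zscore_anomalies(window, threshold=2.0)  # flags the last reading
```

A practical caveat: with the population standard deviation, a window of n readings cannot yield an absolute z-score above \(\sqrt{n-1}\), so the threshold has to be chosen with the window length in mind.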

The edge nodes represent user devices or IoT sensors that collect real-time data and participate in federated learning. These nodes locally train machine learning models on their private data and send encrypted model updates to the Federated Model Aggregation Layer without sharing raw data. Each edge node i trains a local model on its private dataset \({D}_{i}\), using a loss function \({L}_{i}\). The local model update follows:

$${\theta}_{i}^{t+1}={\theta}_{i}^{t}-\eta\nabla{L}_{i}({\theta}_{i}^{t},{D}_{i})$$

where,

\({\theta}_{i}^{t}\) is the model parameter vector of node i at iteration t.

\(\eta\) is the learning rate.

\(\nabla{L}_{i}\left({\theta}_{i}^{t},{D}_{i}\right)\) is the gradient of the loss computed on the local dataset \({D}_{i}\).

To preserve privacy, edge nodes use differential privacy (DP) by adding controlled noise ξ to model updates:

$$\:{\theta\:}_{i}^{t+1}={\theta\:}_{i}^{t}-\eta\:\nabla\:{L}_{i}({\theta\:}_{i}^{t},{D}_{i})+\xi\:$$

where \(\:\xi\:\sim\:N(0,{\sigma\:}^{2})\) ensures privacy by masking individual contributions.
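A minimal sketch of the noisy update rule above, written with plain Python lists. The function name and the example values are hypothetical; the noise is drawn independently per coordinate from \(N(0,{\sigma}^{2})\), as in the equation. Formal differential-privacy guarantees generally also require bounding the sensitivity of each update (for example by clipping), a step this section does not describe and the sketch therefore omits.

```python
import random


def dp_local_update(theta, grad, lr, sigma, rng=random):
    """One local step: theta - lr * grad + xi, with xi ~ N(0, sigma^2) per coordinate."""
    return [t - lr * g + rng.gauss(0.0, sigma) for t, g in zip(theta, grad)]


# Hypothetical two-parameter model and gradient.
rng = random.Random(0)  # seeded only to make the sketch reproducible
theta_next = dp_local_update([1.0, 2.0], [0.5, -1.0], lr=0.1, sigma=0.01, rng=rng)
```

Setting `sigma=0.0` recovers the noise-free update given earlier.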

The EPP-BCFL framework incorporates several key features that strengthen the efficiency and security of federated learning in decentralized environments. Local model training is performed independently at each edge node, where deep learning models are trained using private, device-specific data without sharing raw information. To ensure the secure transmission of model updates, homomorphic encryption is applied before any data leaves the local node, allowing computations to be performed on encrypted data while preserving confidentiality. Additionally, an adaptive training mechanism is employed, which dynamically adjusts training frequency and node participation based on the computational capacity and resource availability of each device. This ensures balanced workload distribution, energy efficiency, and sustained participation across a heterogeneous network of edge devices.

Federated model aggregation layer (FL coordinator)

This layer acts as a central entity that gathers model updates from various edge nodes, aggregates them, and updates the global model. Unlike traditional federated learning, which depends on a centralized server, this layer leverages a distributed blockchain network for improved security and resilience. The global model update is performed by aggregating model updates from N edge devices using Federated Averaging (FedAvg):

$${\theta}^{t+1}=\sum_{i=1}^{N}\frac{|{D}_{i}|}{\sum_{j=1}^{N}|{D}_{j}|}{\theta}_{i}^{t+1}$$

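where \(|{D}_{i}|\) is the number of samples in the local dataset of node i, \({\theta}_{i}^{t+1}\) is the updated local model of node i, and \({\theta}^{t+1}\) is the resulting global model.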

The EPP-BCFL framework incorporates a robust model aggregation layer that enhances security, fairness, and efficiency in federated learning through three key mechanisms. First, the Privacy-Preserving Model Aggregation (PPMA) module employs secure multi-party computation (SMPC) and differential privacy to ensure that local model updates remain confidential. These techniques prevent adversaries from reconstructing or exploiting raw data, even if encrypted updates are intercepted during transmission. Second, the Adaptive Model Aggregation (AMA) mechanism dynamically adjusts the weight of each node’s contribution to the global model based on factors such as data quality, diversity, and representativeness. This helps to prevent bias in the learning process by granting greater influence to nodes with more valuable or balanced datasets, thereby improving the overall generalizability of the model. Third, the integration of blockchain technology guarantees data integrity and trust among participants. Each model update’s hash is stored on the blockchain, creating a tamper-proof and auditable trail of contributions. Additionally, smart contracts are employed to govern the aggregation process, enforcing rules that promote fairness, transparency, and verifiability without centralized control. Together, these mechanisms significantly enhance the scalability, security, and robustness of the federated learning system while ensuring efficient and privacy-preserving model convergence across diverse and distributed environments.
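The FedAvg rule given at the start of this section can be sketched as a dataset-size-weighted average. The function name and the example values are hypothetical; the AMA weighting described above is not specified in enough detail to reproduce, so only plain FedAvg is shown.

```python
def fedavg(updates, sizes):
    """Average local models, weighting node i by |D_i| / sum_j |D_j|."""
    total = sum(sizes)
    dim = len(updates[0])
    return [
        sum(n / total * upd[k] for upd, n in zip(updates, sizes))
        for k in range(dim)
    ]


# Two hypothetical nodes holding 1 and 3 samples respectively.
global_model = fedavg([[1.0, 0.0], [3.0, 4.0]], sizes=[1, 3])
```

The node with three times as much data contributes three times the weight, which is the behavior that adaptive schemes such as AMA are meant to refine.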

Blockchain network layer (decentralized ledger and security management)

The proposed EPP-BCFL framework incorporates several key functions to enhance the security, efficiency, and reliability of federated learning in decentralized healthcare environments. One of its core functionalities is decentralized model update storage, where each local model update is hashed and recorded on the blockchain. This ensures an immutable audit trail, eliminating the possibility of tampering and protecting the global model from poisoning attacks. In addition to preserving integrity, the system offers strong security against adversarial behaviors. Smart contracts are employed to validate the authenticity and consistency of updates from participating edge nodes before they are included in the aggregation process. This validation step, combined with a Byzantine Fault Tolerance (BFT) mechanism, ensures that the system remains robust even when some nodes behave maliciously or are compromised. To further enhance operational efficiency, the framework adopts a hybrid Proof-of-Stake (PoS) and BFT consensus protocol.

Each participating node i generates an update \(\:{\theta\:}_{i}^{t+1}\) and computes a cryptographic hash:

$$\:{H}_{i}=Hash\left({\theta\:}_{i}^{t+1}\right)$$

where \(\:{H}_{i}\) is stored on the blockchain ledger for verification.
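A minimal sketch of the hashing step. The text does not name the hash function or the serialization format, so SHA-256 and a compact JSON encoding are assumptions made here for illustration; the function name is hypothetical.

```python
import hashlib
import json


def hash_update(theta):
    """Hash a model update (list of floats) with SHA-256 over a canonical JSON encoding."""
    payload = json.dumps(theta, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


digest = hash_update([0.95, 2.1])  # 64 hexadecimal characters
```

Whatever encoding is chosen, it has to be byte-for-byte identical on every node, since any difference in serialization changes the digest and makes an honest update look tampered with.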

The probability \(\:{P}_{i}\) of a node i being chosen as a validator depends on its stake \(\:{S}_{i}\) relative to the total stake in the network \(\:{S}_{total}\):

$$\:{P}_{i}=\frac{{S}_{i}}{{S}_{total}}$$

This ensures fairness and reduces centralization risks.
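The stake-proportional selection rule above can be sketched as follows. Node identifiers, stake values, and function names are hypothetical, and a production system would need a verifiable source of randomness rather than a local generator.

```python
import random


def selection_probabilities(stakes):
    """Map each node to P_i = S_i / S_total."""
    total = sum(stakes.values())
    return {node: s / total for node, s in stakes.items()}


def pick_validator(stakes, rng=random):
    """Draw one validator with probability proportional to its stake."""
    nodes = list(stakes)
    return rng.choices(nodes, weights=[stakes[n] for n in nodes], k=1)[0]


stakes = {"hospital_a": 10, "clinic_b": 30, "lab_c": 60}  # hypothetical stakes
probs = selection_probabilities(stakes)
chosen = pick_validator(stakes, random.Random(42))
```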

For consensus to be achieved, more than 2/3 of nodes must agree on the same model update:

$$\:\sum\:_{i=1}^{N}I\left({V}_{i}={H}_{i}\right)>\:\frac{2N}{3}$$

where \({V}_{i}\) represents the validator’s decision and \(I(\cdot)\) is an indicator function that checks if the update matches the stored hash.
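A minimal sketch of the two-thirds check. It assumes one reading of the condition above: each validator recomputes the hash of the received update and reports it, and the update is accepted when strictly more than 2/3 of the reported values equal the hash stored on the ledger. The function name and sample values are hypothetical.

```python
def quorum_reached(votes, stored_hash):
    """True when strictly more than 2/3 of the validator votes match the stored hash."""
    agree = sum(1 for v in votes if v == stored_hash)
    return 3 * agree > 2 * len(votes)  # integer form of agree > 2N/3


stored = "ab12"  # placeholder digest
accepted = quorum_reached(["ab12"] * 5 + ["ffff"] * 2, stored)  # 5 of 7 agree
rejected = quorum_reached(["ab12"] * 4 + ["ffff"] * 2, stored)  # 4 of 6 is exactly 2/3
```

Comparing `3 * agree` with `2 * N` keeps the test in integer arithmetic, so the boundary case of exactly two-thirds is rejected without floating-point rounding concerns.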

Lightweight PoS-BFT mechanism

The EPP-BCFL framework employs a lightweight Proof-of-Stake Byzantine Fault Tolerance (PoS-BFT) consensus protocol to achieve secure, efficient model update verification. Unlike traditional PoS-BFT protocols, which incur high computational and communication costs, this approach introduces two optimizations. First, a reduced validator set (a small subset of k ≪ N nodes selected based on node stake and recent activity) minimizes communication overhead and improves processing speed without compromising decentralization. Second, the optimized BFT threshold achieves consensus when more than 2/3 of the selected validators agree on an update, thereby maintaining Byzantine fault tolerance with significantly fewer consensus messages. This lightweight structure is well-suited for edge environments: it reduces latency and keeps the cryptographic verification complexity at O(1) per update, while overall consensus complexity is reduced to O(k²), which is computationally manageable for small k (e.g., 5 or 7).

In the proposed PoS + BFT hybrid consensus mechanism, the stake of each validator node is quantified based on a composite trust score that incorporates both historical participation metrics (e.g., uptime, accuracy in model updates) and a predefined token-based stake. This ensures a balanced selection process that discourages malicious behavior while maintaining decentralization. To mitigate the risk of centralization, we introduce a cap on the maximum allowable stake contribution from any single entity.
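The text does not give a formula for the composite trust score or for the stake cap. The sketch below is therefore purely illustrative: it assumes the cap is a fraction of the total token stake and that the capped stake is scaled by a participation score in [0, 1]. All names, the multiplicative form, and the numbers are hypothetical.

```python
def effective_stakes(token_stake, trust, cap_fraction=0.25):
    """Cap each node's token stake, then scale it by its participation score."""
    cap = cap_fraction * sum(token_stake.values())
    return {node: min(s, cap) * trust[node] for node, s in token_stake.items()}


token_stake = {"a": 70, "b": 20, "c": 10}   # hypothetical token holdings
trust = {"a": 1.0, "b": 0.5, "c": 1.0}      # hypothetical uptime/accuracy scores
stakes = effective_stakes(token_stake, trust, cap_fraction=0.5)
```

Under these assumed values, node `a` holds 70% of the tokens but enters validator selection with a capped stake of 50, which illustrates how a cap limits the influence of a single large holder.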

Regarding fault tolerance, the hybrid model inherits the safety property of BFT, which can tolerate up to \(f=\lfloor(n-1)/3\rfloor\) Byzantine nodes out of n total nodes. However, if the proportion of malicious nodes exceeds 1/3, the system initiates a fallback mechanism that includes:

- temporary suspension of consensus,
- triggering an external audit via a secondary consensus layer with higher-trust nodes,
- and stake redistribution penalties to deter collusion.
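The tolerance bound \(f=\lfloor(n-1)/3\rfloor\) can be checked with a short sketch. The function names are hypothetical, and how the system estimates the number of faulty nodes is not specified here, so that count is simply passed in.

```python
def max_byzantine(n):
    """Largest number of Byzantine nodes tolerated among n nodes: floor((n - 1) / 3)."""
    return (n - 1) // 3


def within_tolerance(n, faulty):
    """True while the faulty count stays within the BFT bound."""
    return faulty <= max_byzantine(n)


bounds = {n: max_byzantine(n) for n in (4, 5, 7, 10)}
```

For the validator-set sizes mentioned earlier, k = 5 tolerates one faulty validator and k = 7 tolerates two; a fallback of the kind listed above would apply once `within_tolerance` returns `False`.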

The Efficient and Privacy-Preserving Blockchain-Enabled Federated Learning (EPP-BCFL) framework enhances security, efficiency, and privacy in federated learning by integrating blockchain technology into the model training process. It begins with local training at edge nodes, such as user devices or IoT sensors, where models are trained using private data without sharing raw inputs. Each node generates a cryptographic hash of its model update, encrypts the update, and transmits it to the Federated Model Aggregation Layer, while the hash is stored on the blockchain to ensure integrity. A Proof-of-Stake (PoS) mechanism selects validator nodes based on their stake, providing energy-efficient consensus. Validators verify updates by matching them with stored hashes, and if more than two-thirds agree, the update is accepted through Byzantine Fault Tolerance (BFT) and recorded on the ledger. Verified updates are then aggregated using a weighted averaging method that accounts for data distribution, and the global model is securely stored and distributed to edge nodes for the next training round. This decentralized approach removes the risks of centralized aggregation, ensures tamper-proof auditing, and defends against model poisoning attacks. With optimized complexity (PoS: O(N), hash verification: O(1), aggregation: O(N)), EPP-BCFL is scalable and robust for real-world federated learning scenarios.

Time complexity analysis

The most computationally intensive phase is the local model training at each edge node, which involves training a neural network on private data. This results in a complexity of \(O(N\times E\times D\times M)\), accounting for multiple epochs across all edge nodes, where N is the number of edge nodes, E the number of local epochs, D the local dataset size, and M the number of model parameters. The cryptographic hash computation and encryption add an additional O(N × M) cost.

In the validator selection phase using Proof-of-Stake (PoS), each validator computes a selection probability, which is a linear operation, resulting in a time complexity of O(N). The selected validators proceed to the Byzantine Fault Tolerance (BFT) verification stage, where hash verification and inter-validator communication are performed. This contributes an additional \(\:O(kM\:+\:k^2)\) time complexity, with k representing the number of validator nodes.

The global model aggregation phase requires weighted averaging of model updates from all participating nodes, incurring a complexity of \(\:O(N\:\times\:\:M)\). Finally, distribution of the global model back to the edge nodes also requires O(N × M) time. Summing all stages, the overall time complexity of the EPP-BCFL framework can be represented as:

$$\:O(N\times\:E\times\:D\times\:M+kM+k^2)\:$$

This complexity indicates that the framework scales linearly with the number of nodes and dataset size during local training, and quadratically with the number of validators in the BFT phase. However, since k is typically small and constant (e.g., k = 5), the quadratic term has a minimal impact on scalability. Thus, the framework remains computationally efficient and well-suited for distributed, privacy-preserving federated learning environments.
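As an illustrative calculation, take hypothetical values that are not drawn from any experiment in this work: N = 100 edge nodes, E = 5 local epochs, D = 1000 samples per node, M = 10^6 model parameters, and k = 5 validators (reading E, D, and M as epochs, local dataset size, and model size). The three terms then compare as follows:

$$N\times E\times D\times M=100\times 5\times 10^{3}\times 10^{6}=5\times 10^{11},\qquad kM=5\times 10^{6},\qquad k^{2}=25$$

Under these assumed values the local-training term exceeds the consensus terms by about five orders of magnitude, which is consistent with the observation that the quadratic term in k has little effect on overall scalability.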

Sequence of applying differential privacy (DP) and homomorphic encryption (HE)

In our proposed framework, Differential Privacy (DP) is applied prior to Homomorphic Encryption (HE) at the edge nodes. This ensures that noise introduced for privacy preservation does not interfere with the encryption or decryption process. Specifically, DP perturbation is added to model updates or gradients, and the differentially private data is subsequently encrypted using HE before transmission, preserving both privacy and encryption integrity.
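The ordering described above (noise first, encryption second) can be sketched with an additively homomorphic scheme. The text does not name the HE scheme, so a toy Paillier cryptosystem is assumed here purely for illustration; the key is built from two small, publicly known Mersenne primes and offers no real security. The fixed-point scale and all names are likewise hypothetical. Because Paillier operates on integers, the noisy values are scaled and rounded before encryption.

```python
import math
import random

# Toy Paillier key from two Mersenne primes (insecure, illustration only).
P, Q = 2**31 - 1, 2**61 - 1
MODULUS = P * Q
MODULUS_SQ = MODULUS * MODULUS
LAMBDA = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(P - 1, Q - 1)
MU = pow(LAMBDA, -1, MODULUS)  # valid for the generator g = MODULUS + 1
SCALE = 10**6  # fixed-point scale (assumption)


def encrypt(m, rng=random):
    """Encrypt an integer m: c = (1 + m * n) * r^n mod n^2."""
    while True:
        r = rng.randrange(1, MODULUS)
        if math.gcd(r, MODULUS) == 1:
            break
    g_m = (1 + (m % MODULUS) * MODULUS) % MODULUS_SQ
    return g_m * pow(r, MODULUS, MODULUS_SQ) % MODULUS_SQ


def decrypt(c):
    """Recover the signed integer plaintext."""
    m = (pow(c, LAMBDA, MODULUS_SQ) - 1) // MODULUS * MU % MODULUS
    return m - MODULUS if m > MODULUS // 2 else m


def add_encrypted(c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return c1 * c2 % MODULUS_SQ


def protect(update, sigma, rng=random):
    """Apply DP noise first, then fixed-point encoding, then encryption."""
    noisy = [x + rng.gauss(0.0, sigma) for x in update]
    return [encrypt(round(v * SCALE), rng) for v in noisy]
```

With this construction an aggregator can combine ciphertexts from several nodes using `add_encrypted` and only the key holder sees the decrypted sum; dividing that sum by `SCALE` returns it to the original units.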

Coordination across heterogeneous edge devices

To manage heterogeneous edge environments, we implement an asynchronous training strategy coordinated by a centralized federated controller. Edge devices operate independently according to their computational capacity, with local training schedules dynamically adjusted. The controller adopts an adaptive aggregation mechanism that collects updates within a flexible time window and employs a learning rate adaptation scheme to ensure convergence across non-IID and variably available clients.
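The windowed collection step can be sketched as follows. The text does not specify how late or stale updates are weighted, so the staleness discount used here, 1 / (1 + rounds behind), is an assumption standing in for the adaptation scheme; the function name and the tuple layout are hypothetical.

```python
def aggregate_window(arrivals, window_end, current_round):
    """Average the updates that arrived by window_end, discounting stale ones.

    arrivals: list of (arrival_time, base_round, update) tuples.
    Returns None when no update arrived inside the window.
    """
    picked = [(base, upd) for t, base, upd in arrivals if t <= window_end]
    if not picked:
        return None
    weights = [1.0 / (1 + current_round - base) for base, _ in picked]
    total = sum(weights)
    dim = len(picked[0][1])
    return [
        sum(w * upd[k] for w, (_, upd) in zip(weights, picked)) / total
        for k in range(dim)
    ]


arrivals = [
    (1.0, 3, [1.0, 1.0]),      # fresh update from a fast device
    (2.0, 2, [4.0, 0.0]),      # one round stale, weight 0.5
    (9.0, 3, [100.0, 100.0]),  # arrives after the window and is left out
]
merged = aggregate_window(arrivals, window_end=5.0, current_round=3)
```

Updates that miss the window are not discarded in principle; in a design of this kind they would be considered in a later window with a larger staleness discount.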

Privacy preservation and secure aggregation module

In our proposed EPP-BCFL framework, Differential Privacy is applied at the edge node level before the generation of zero-knowledge proofs (ZKPs). The local model gradients or parameters are first perturbed using DP (adding continuous noise), and then these perturbed values are discretized (e.g., as fixed-point or scaled integers) to ensure compatibility with integer-based ZKP schemes. This discretization preserves the DP guarantees within a bounded precision range and allows the subsequent ZKP protocols, implemented using efficient zk-SNARKs, to verify the integrity of the model updates without accessing raw data.
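The discretization step can be sketched as a fixed-point encoding. The number of fractional bits and the function names are assumptions; the text only states that perturbed values are converted to fixed-point or scaled integers. No proof system is invoked here, since the zk-SNARK circuit itself is not described.

```python
def to_fixed_point(values, frac_bits=16):
    """Encode floats as integers with frac_bits fractional bits."""
    scale = 1 << frac_bits
    return [round(v * scale) for v in values]


def from_fixed_point(ints, frac_bits=16):
    """Decode fixed-point integers back to floats."""
    scale = 1 << frac_bits
    return [i / scale for i in ints]


noisy_update = [0.5, -0.25, 0.123456]  # hypothetical values after DP noise
encoded = to_fixed_point(noisy_update)
decoded = from_fixed_point(encoded)
```

Each decoded value differs from the original by at most half of one unit in the last fractional bit, here \(2^{-17}\), which is the bounded precision loss referred to above. Since the rounding is applied after the noise, it acts only on already-perturbed values.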
