Improving CNN predictive accuracy in COVID-19 health analytics

This section presents the methodological framework developed to support a CNN-based model for COVID-19 severity classification using multimodal healthcare data. Given the complexity and clinical significance of the task, the methodology was designed to ensure both predictive accuracy and medical relevance. Our approach integrates diverse data sources—including chest imaging, vital signs, laboratory biomarkers, and patient histories—acquired from electronic health records (EHRs). Each stage of the model development pipeline, from data collection and preprocessing to model design, training, and evaluation, was carefully structured to address challenges such as class imbalance, data variability, and clinical interpretability. We employed advanced techniques such as transfer learning, hierarchical feature extraction, and class-weighted loss functions, alongside rigorous performance validation using statistical metrics and expert review. The following subsections provide a detailed account of the processes and strategies implemented to develop a robust, generalizable, and clinically meaningful COVID-19 severity prediction model.

Methodological approaches in CNN-based COVID-19 predictive analytics

Our methodological strategy provides a structured approach to harnessing CNNs for COVID-19 predictive analytics. By systematically addressing the data, architectural, and clinical challenges inherent in healthcare AI applications, our framework emphasizes both technical robustness and translational relevance.

Integrated data acquisition and quality governance

To support reliable CNN modeling, we implemented a multimodal data acquisition protocol encompassing chest X-rays, CT scans, and structured clinical variables from electronic health records (EHRs), including vital signs, biomarkers, and comorbidity data. Sampling was stratified across severity levels to keep every severity class represented and to limit sampling bias. A layered quality assurance pipeline was employed, integrating inter-annotator agreement checks, automated label consistency scans, and radiologist-assisted review of diagnostic outliers.
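Inter-annotator agreement on categorical severity labels is commonly summarized with Cohen's kappa, which corrects raw agreement for agreement expected by chance. The source does not name the agreement statistic used, so the sketch below is an assumption; the annotator labels and case IDs are hypothetical, and `cases_for_review` is an illustrative helper for routing disagreements to radiologist review.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: chance-corrected agreement between two annotators."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of cases")
    n = len(labels_a)
    # Observed agreement: fraction of cases given the same label
    p_obs = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each annotator's own label frequencies
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_exp = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if p_exp == 1.0:  # both annotators used one identical label throughout
        return 1.0
    return (p_obs - p_exp) / (1.0 - p_exp)


def cases_for_review(case_ids, labels_a, labels_b):
    """Hypothetical helper: cases where the annotators disagree."""
    return [cid for cid, a, b in zip(case_ids, labels_a, labels_b) if a != b]


# Hypothetical severity labels from two annotators
ann_1 = ["mild", "mild", "severe", "critical"]
ann_2 = ["mild", "moderate", "severe", "critical"]
print(round(cohens_kappa(ann_1, ann_2), 3))  # 0.667
print(cases_for_review(["p1", "p2", "p3", "p4"], ann_1, ann_2))  # ['p2']
```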

Advanced preprocessing and data augmentation techniques

Preprocessing included normalization of imaging data to mitigate scanner and protocol heterogeneity, together with artifact removal and spatial standardization. To counter class imbalance and data sparsity, we used augmentation techniques such as GAN-generated synthetic images, Gaussian noise injection, and intensity transformations [40,41,42]. These methods were validated to avoid overfitting and clinical misrepresentation.
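Two of the named augmentations, Gaussian noise injection and intensity transformation, can be sketched on a grayscale image held as a list of rows with pixel values in [0, 1]. This is a minimal illustration, not the authors' pipeline: the noise level `sigma` and the gain/bias ranges are hypothetical defaults, and GAN-based synthesis is not shown.

```python
import random


def adjust_intensity(image, gain, bias):
    """Linear intensity transform gain * pixel + bias, clipped to [0, 1]."""
    return [[min(1.0, max(0.0, gain * px + bias)) for px in row] for row in image]


def add_gaussian_noise(image, sigma, rng):
    """Add zero-mean Gaussian noise to every pixel, clipped to [0, 1]."""
    return [[min(1.0, max(0.0, px + rng.gauss(0.0, sigma))) for px in row] for row in image]


def augment(image, rng, sigma=0.02, gain_range=(0.9, 1.1), bias_range=(-0.05, 0.05)):
    """Random intensity jitter followed by noise injection (hypothetical ranges)."""
    gain = rng.uniform(*gain_range)
    bias = rng.uniform(*bias_range)
    return add_gaussian_noise(adjust_intensity(image, gain, bias), sigma, rng)


rng = random.Random(0)
image = [[0.5] * 4 for _ in range(4)]  # toy 4x4 grayscale patch
augmented = augment(image, rng)
```

Clipping keeps augmented pixels in the valid intensity range, which is one simple guard against producing physically implausible images.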

Hierarchical CNN architecture and transfer learning

Our CNN design follows a hierarchical structure comprising multiple convolutional layers (Conv2D with ReLU), max-pooling, and fully connected layers, supported by batch normalization and dropout for stability and generalization. As part of a transfer learning approach, we used pre-trained backbones such as ResNet50 [43,44] and EfficientNet-B3 [45,46], which improved learning efficiency on the limited COVID-19 datasets. Attention modules were included to emphasize radiologically salient regions.
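The source does not specify the attention module's design. As one simplified illustration of spatial attention, the sketch below scores each spatial location by the channel-averaged activation, squashes the score with a sigmoid, and rescales every channel at that location. Published modules such as CBAM additionally learn a convolution over pooled maps; this parameter-free version is an assumption made only to show the reweighting idea.

```python
import math


def spatial_attention(feature_maps):
    """Reweight all channels by a per-location sigmoid attention map.

    feature_maps: list of C channels, each an H x W list of lists.
    Returns (reweighted_maps, attention_map).
    """
    channels = len(feature_maps)
    height, width = len(feature_maps[0]), len(feature_maps[0][0])
    # Attention score per location: sigmoid of the mean activation across channels
    attention = [
        [1.0 / (1.0 + math.exp(-sum(fm[i][j] for fm in feature_maps) / channels))
         for j in range(width)]
        for i in range(height)
    ]
    # Strongly activated (salient) locations keep more of their signal
    reweighted = [
        [[fm[i][j] * attention[i][j] for j in range(width)] for i in range(height)]
        for fm in feature_maps
    ]
    return reweighted, attention


maps = [[[0.0, 4.0]], [[0.0, 4.0]]]  # 2 channels, 1x2 spatial grid
weighted, attn = spatial_attention(maps)
```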

Model optimization and training protocols

We tuned hyperparameters using grid search with 5-fold cross-validation, optimizing the learning rate, dropout rate, and filter count [47,48]. Data were split in a stratified, patient-wise manner so that all samples from a given patient fell into the same partition, preventing leakage between training and validation sets. Class imbalance was mitigated with a weighted cross-entropy loss whose severity-specific class weights were calibrated to clinical importance (e.g., critical: 2.5, mild: 0.5).
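A stratified, patient-wise split assigns whole patients, not individual images, to folds, while keeping severity proportions similar across folds. The sketch below assumes each patient carries a single severity label and deals shuffled patients of each class round-robin into `k` folds; the record layout `(image_id, patient_id, severity)` is hypothetical. scikit-learn's `StratifiedGroupKFold` is an off-the-shelf alternative.

```python
import random
from collections import defaultdict


def patient_stratified_folds(records, k=5, seed=0):
    """Map image_id -> fold so that each patient's images share one fold.

    records: iterable of (image_id, patient_id, severity); assumes one
    severity label per patient. Patients are stratified by severity.
    """
    records = list(records)
    patient_label = {}
    for _, pid, severity in records:
        patient_label.setdefault(pid, severity)
    by_class = defaultdict(list)
    for pid, severity in patient_label.items():
        by_class[severity].append(pid)
    rng = random.Random(seed)
    patient_fold, offset = {}, 0
    for severity in sorted(by_class):
        pids = sorted(by_class[severity])
        rng.shuffle(pids)
        # Round-robin within each class keeps class proportions per fold similar;
        # the running offset keeps total fold sizes balanced across classes
        for i, pid in enumerate(pids):
            patient_fold[pid] = (offset + i) % k
        offset += len(pids)
    return {img: patient_fold[pid] for img, pid, _ in records}


# Hypothetical data: 10 patients with 2 images each, 5 mild and 5 critical
patients = [f"p{i}" for i in range(10)]
recs = [(f"{p}_{v}", p, "mild" if int(p[1:]) < 5 else "critical")
        for p in patients for v in range(2)]
folds = patient_stratified_folds(recs, k=5)
```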

Evaluation metrics and statistical validation

To evaluate the model, we used a broad set of performance indicators, including accuracy, precision, recall, F1-score, specificity, and AUC-ROC, each reported with a 95% confidence interval [49,50]. In the binary classification setting, the CNN achieved 97.2% accuracy and an AUC of 0.987. In the four-category severity classification, the critical class achieved the highest per-class F1-score (77.25%).
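For the multi-class severity task, precision, recall, specificity, and F1 are computed per class by treating that class as positive and all others as negative (one-vs-rest). The sketch below shows this standard computation; the labels are hypothetical.

```python
def one_vs_rest_metrics(y_true, y_pred, positive):
    """Precision, recall (sensitivity), specificity and F1 for one class vs the rest."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = len(pairs) - tp - fp - fn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall,
            "specificity": specificity, "f1": f1}


y_true = ["critical", "critical", "mild", "severe", "critical"]
y_pred = ["critical", "mild", "mild", "critical", "critical"]
critical = one_vs_rest_metrics(y_true, y_pred, "critical")
```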

Multimodal fusion and temporal modeling

To capture complex patient trajectories, we employed a hybrid data fusion approach combining early (feature-level) and late (decision-level) fusion strategies. Temporal encoding of disease progression, extracted from sequential clinical records, enabled the model to capture longitudinal patterns predictive of severity escalation.
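The two fusion levels and a simple temporal feature can be illustrated as follows: early fusion concatenates modality feature vectors before a shared classifier, late fusion takes a weighted average of per-modality class probabilities, and a least-squares slope summarizes how a clinical variable evolves over time. The modality names, fusion weights, and the use of a linear trend as the temporal encoding are hypothetical choices for illustration; the source does not specify them.

```python
def early_fusion(image_features, clinical_features):
    """Feature-level fusion: concatenate modality feature vectors."""
    return list(image_features) + list(clinical_features)


def late_fusion(prob_by_modality, weights):
    """Decision-level fusion: weighted average of per-modality class probabilities."""
    total = sum(weights[m] for m in prob_by_modality)
    n_classes = len(next(iter(prob_by_modality.values())))
    return [
        sum(weights[m] * probs[c] for m, probs in prob_by_modality.items()) / total
        for c in range(n_classes)
    ]


def linear_trend(times, values):
    """Least-squares slope of a clinical variable over time (a simple temporal feature)."""
    t_mean = sum(times) / len(times)
    v_mean = sum(values) / len(values)
    num = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    den = sum((t - t_mean) ** 2 for t in times)
    return num / den


# Hypothetical example: SpO2 falling by 2 points per day feeds the clinical vector
spo2_slope = linear_trend([0, 1, 2], [96, 94, 92])
fused_vector = early_fusion([0.3, 0.7], [spo2_slope, 38.2])
fused_probs = late_fusion({"imaging": [0.2, 0.8], "clinical": [0.6, 0.4]},
                          {"imaging": 0.75, "clinical": 0.25})
```

In a hybrid scheme, the early-fused vector would feed one classifier whose output is then combined with other modality-specific outputs at the decision level.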

This methodological framework provides a systematic approach for evaluating and addressing the multifaceted challenges of deploying CNNs in COVID-19 predictive analytics, emphasizing model robustness and clinical relevance while accounting for the specific constraints and requirements of healthcare applications.

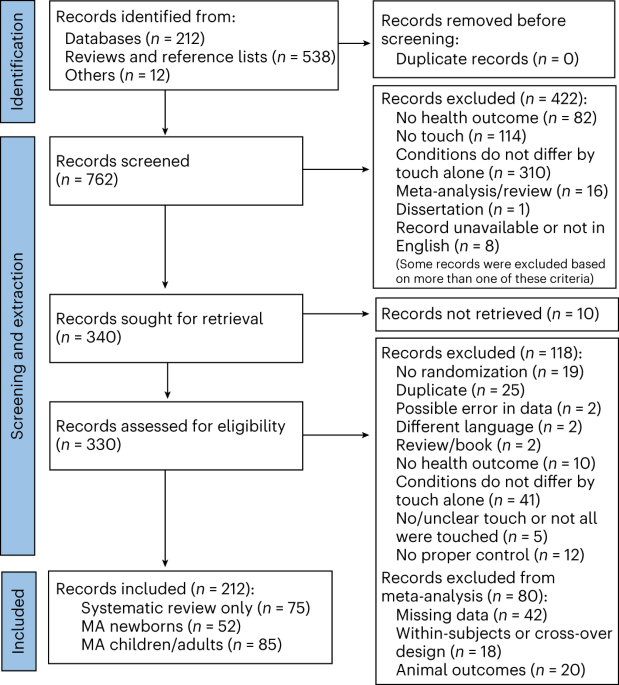

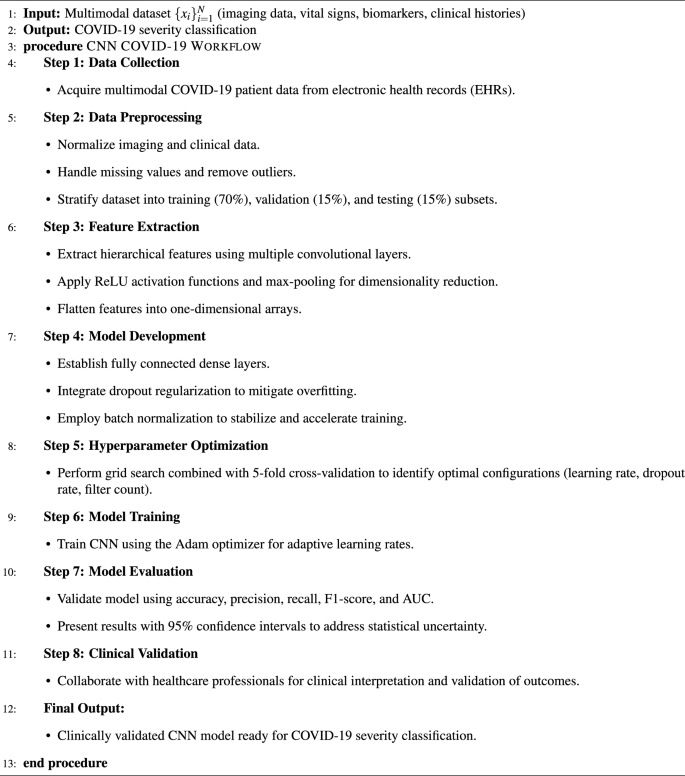

Proposed CNN architecture.

CNN-based framework for COVID-19 severity classification

This subsection describes, step by step, the methodology used to develop a CNN-based model that classifies COVID-19 severity from multimodal EHR data. Figure 1 illustrates the proposed CNN architecture, showing the sequential flow from data collection and preprocessing through hierarchical feature extraction and classification layers to the final output. Algorithm 1 outlines the corresponding workflow of the framework: multimodal data collection, preprocessing, feature extraction, model development, optimization, evaluation, and clinical validation, aimed at robust and interpretable predictions.

Step 1: Comprehensive Data Collection: The initial step involved systematically collecting a multimodal dataset from COVID-19 patient electronic health records (EHRs). The dataset comprised several data modalities, including radiological imaging such as chest X-rays and CT scans, clinical vital signs such as body temperature and oxygen saturation, laboratory biomarkers (e.g., C-reactive protein levels, leukocyte counts), and medical histories capturing pre-existing conditions and previous treatments. This extensive data collection ensured comprehensive patient representation and supported robust predictive modeling [51,52,53].

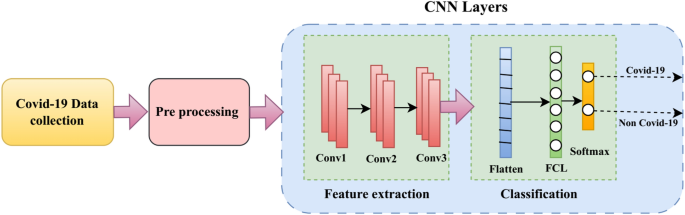

CNN-Based Framework for COVID-19 Detection.

Step 2: Rigorous Data Preprocessing: Next, a data preprocessing phase was conducted to ensure data integrity and reliability. Normalization was applied consistently across data modalities to place features on comparable scales, which stabilizes and speeds up training. Missing values were handled with appropriate imputation strategies, and outliers were removed to improve the accuracy and stability of subsequent analyses.
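The source does not name the imputation, outlier, or normalization methods. As one common combination, the sketch below uses median imputation, the 1.5×IQR outlier rule, and z-score normalization on a single clinical variable; all three choices and the example C-reactive protein values are assumptions. In practice, the medians, quartiles, means, and standard deviations would be estimated on the training split only and then applied to validation and test data.

```python
import statistics


def median_impute(values):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = statistics.median(observed)
    return [fill if v is None else v for v in values]


def iqr_outlier_mask(values, k=1.5):
    """True for values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v < low or v > high for v in values]


def z_score(values):
    """Standardize to zero mean and unit (population) standard deviation."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return [(v - mean) / sd if sd else 0.0 for v in values]


crp = [5, None, 7, 6, 200, 8]               # hypothetical CRP values (mg/L)
crp_filled = median_impute(crp)             # [5, 7, 7, 6, 200, 8]
outliers = iqr_outlier_mask(crp_filled)     # flags the 200 mg/L value
kept = [v for v, bad in zip(crp_filled, outliers) if not bad]
crp_scaled = z_score(kept)
```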

Step 3: Hierarchical Feature Extraction: In the feature extraction phase, the CNN architecture was employed to capture hierarchical representations from the multimodal EHR data. Multiple convolutional layers were utilized to systematically identify and extract complex, hierarchical patterns inherent in medical imaging and clinical data. Rectified Linear Unit (ReLU) activation functions were applied after each convolutional layer to introduce necessary non-linear transformations, enabling the CNN to effectively model intricate relationships within the data. Max-pooling layers followed convolutional operations to achieve dimensionality reduction, emphasizing the most prominent and salient features. Extracted features were subsequently flattened into one-dimensional feature arrays to prepare for input into the fully connected layers.
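The convolution, ReLU, max-pooling, and flattening sequence described above can be traced on a toy single-channel image in pure Python. This is an illustrative sketch, not the model's implementation: it uses one hand-set 3×3 vertical-edge kernel (hypothetical; real filters are learned), stride 1 with no padding, and non-overlapping 2×2 pooling.

```python
def conv2d_valid(image, kernel):
    """2D 'valid' cross-correlation (as computed by CNN conv layers), stride 1."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
         for j in range(out_w)]
        for i in range(out_h)
    ]


def relu(fmap):
    """Element-wise max(0, x)."""
    return [[max(0.0, x) for x in row] for row in fmap]


def max_pool_2x2(fmap):
    """Non-overlapping 2x2 max-pooling (stride 2); a trailing odd row/column is dropped."""
    return [
        [max(fmap[i][j], fmap[i][j + 1], fmap[i + 1][j], fmap[i + 1][j + 1])
         for j in range(0, len(fmap[0]) - 1, 2)]
        for i in range(0, len(fmap) - 1, 2)
    ]


def flatten(fmap):
    """Row-major flattening into a 1D feature vector."""
    return [x for row in fmap for x in row]


# Toy 6x6 image with a vertical edge; kernel responds to left-to-right increases
image = [[0.0, 0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(6)]
edge_kernel = [[-1.0, 0.0, 1.0] for _ in range(3)]
features = flatten(max_pool_2x2(relu(conv2d_valid(image, edge_kernel))))
# 6x6 -> conv 3x3 -> 4x4 -> pool 2x2 -> 2x2 -> flatten -> 4 values
```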

Step 4: Strategic Model Development: During model development, fully connected dense layers were systematically designed to process and interpret extracted features, generating actionable classification outputs. Dropout regularization was strategically integrated into the dense layers, effectively reducing the risk of model overfitting by randomly omitting neurons during training, thereby enhancing the model’s generalization capabilities. Additionally, batch normalization layers were employed to stabilize learning dynamics, accelerate convergence, and improve the efficiency of the training process.
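Batch normalization and dropout behave differently at training time, as the sketch below shows for a single feature. Batch normalization standardizes each feature using the mini-batch mean and variance before applying a learnable scale (`gamma`) and shift (`beta`). Dropout is shown in its common "inverted" form, where surviving activations are scaled by 1/(1 − rate) so that no rescaling is needed at inference. The rate 0.4 matches the value reported later in this section; the other defaults are standard but assumed here.

```python
import math
import random


def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Training-mode batch norm for one feature across a mini-batch."""
    mean = sum(batch) / len(batch)
    var = sum((x - mean) ** 2 for x in batch) / len(batch)
    return [gamma * (x - mean) / math.sqrt(var + eps) + beta for x in batch]


def inverted_dropout(activations, rate, rng, training=True):
    """Zero each unit with probability `rate`; scale survivors by 1 / (1 - rate)."""
    if not training or rate == 0.0:
        return list(activations)  # identity at inference time
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


normed = batch_norm([1.0, 2.0, 3.0, 4.0])
rng = random.Random(0)
dropped = inverted_dropout([1.0] * 10000, 0.4, rng)
# The expected value of each unit is preserved: mean(dropped) is close to 1.0
```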

Step 5: Comprehensive Hyperparameter Optimization: Hyperparameters were optimized using grid search combined with 5-fold cross-validation. Combinations of learning rate, dropout rate, and number of convolutional filters were evaluated systematically, and the configuration with the best mean cross-validated performance was selected. Selecting on cross-validated rather than training performance favors configurations that generalize to unseen data.
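Grid search with k-fold cross-validation evaluates every combination in the grid on each fold and keeps the combination with the highest mean validation score. In the sketch below, `score_fold` stands in for "train on the other folds, score on this one"; the grid values and the toy scoring function are hypothetical and only demonstrate the search loop.

```python
from itertools import product
from statistics import fmean


def grid_search_cv(param_grid, n_folds, score_fold):
    """Exhaustive grid search; returns (best_params, best_mean_score).

    param_grid: {name: [candidate values]}
    score_fold: callable(params, fold_index) -> validation score (higher is better)
    """
    names = sorted(param_grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(param_grid[n] for n in names)):
        params = dict(zip(names, values))
        score = fmean(score_fold(params, k) for k in range(n_folds))
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


# Hypothetical grid and a toy scorer that peaks at lr=1e-3, dropout=0.4, filters=64
grid = {"learning_rate": [1e-4, 1e-3], "dropout": [0.3, 0.4, 0.5], "filters": [32, 64]}


def toy_score(params, fold):
    return (-abs(params["learning_rate"] - 1e-3)
            - abs(params["dropout"] - 0.4)
            - abs(params["filters"] - 64) / 1000)


best, best_score = grid_search_cv(grid, 5, toy_score)
```

The cost grows multiplicatively: this grid already needs 2 × 3 × 2 × 5 = 60 training runs, which is why grids are usually kept small.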

Step 6: CNN Model Training: The CNN was trained with the Adam optimizer, chosen for its adaptive learning rates and computational efficiency. Adam maintains running estimates of the mean and uncentered variance of each parameter's gradient and uses them to scale the step size per parameter, which generally yields fast and stable convergence. Training continued until performance on the validation set stopped improving.
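The standard Adam update can be written out for a list of scalar parameters to show what "adaptive" means. Each parameter keeps bias-corrected moving averages of its gradient (`m`) and squared gradient (`v`), and its step is `lr * m_hat / (sqrt(v_hat) + eps)`, so parameters with very different gradient scales take comparably sized steps. The toy quadratic and the learning rates are hypothetical; the other defaults are the commonly used values.

```python
import math


def adam_step(params, grads, state, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update over a list of scalar parameters; mutates `state`."""
    state["t"] += 1
    t = state["t"]
    updated = []
    for i, (p, g) in enumerate(zip(params, grads)):
        # Exponential moving averages of the gradient and squared gradient
        state["m"][i] = beta1 * state["m"][i] + (1 - beta1) * g
        state["v"][i] = beta2 * state["v"][i] + (1 - beta2) * g * g
        # Bias correction for the zero initialization of m and v
        m_hat = state["m"][i] / (1 - beta1 ** t)
        v_hat = state["v"][i] / (1 - beta2 ** t)
        # Per-parameter step scaled by the running RMS of that parameter's gradient
        updated.append(p - lr * m_hat / (math.sqrt(v_hat) + eps))
    return updated


def init_state(n):
    return {"t": 0, "m": [0.0] * n, "v": [0.0] * n}


# Toy quadratic with very different curvatures along each axis
def loss(x):
    return (x[0] - 3) ** 2 + 100 * (x[1] + 1) ** 2


def grad(x):
    return [2 * (x[0] - 3), 200 * (x[1] + 1)]


x, state = [0.0, 0.0], init_state(2)
first = adam_step(x, grad(x), state, lr=0.1)
# Both coordinates move by about 0.1 despite a ~33x difference in gradient size
```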

Step 7: Robust Model Evaluation: The model was evaluated with a suite of established performance metrics (accuracy, precision, recall, F1-score, specificity, and AUC-ROC). Each metric was reported with a 95% confidence interval to quantify the statistical uncertainty arising from the limited size and complexity of the COVID-19 dataset.
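The source does not state how the 95% confidence intervals were computed. One widely used option is the percentile bootstrap: resample the evaluation cases with replacement, recompute the metric each time, and take the 2.5th and 97.5th percentiles. The sketch below assumes that method; the labels and number of resamples are hypothetical, and `metric` can be any function of `(y_true, y_pred)`.

```python
import random


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def bootstrap_ci(y_true, y_pred, metric, n_boot=2000, alpha=0.05, seed=0):
    """Point estimate and percentile-bootstrap (1 - alpha) confidence interval."""
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        stats.append(metric([y_true[i] for i in idx], [y_pred[i] for i in idx]))
    stats.sort()
    lo_idx = int(n_boot * alpha / 2)
    hi_idx = n_boot - lo_idx - 1
    return metric(y_true, y_pred), stats[lo_idx], stats[hi_idx]


# Hypothetical binary test set: 90 of 100 predictions correct
y_t = [1] * 50 + [0] * 50
y_p = [1] * 45 + [0] * 5 + [0] * 45 + [1] * 5
point, lo, hi = bootstrap_ci(y_t, y_p, accuracy)
```

For per-patient data, resampling should be done at the patient level so that correlated images from one patient are kept together.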

Step 8: Clinical Validation and Expert Collaboration: The final phase involved rigorous clinical validation through collaboration with healthcare professionals. Clinical experts evaluated and interpreted the model’s outputs, confirming their clinical relevance and practical utility. This step ensured that the predictive outcomes provided by the CNN model were actionable, medically meaningful, and aligned with established clinical standards.

By adhering to this detailed, step-by-step methodology, the CNN-based framework offers a robust, clinically validated approach for accurately classifying COVID-19 severity, significantly enhancing decision-making capabilities within clinical settings.

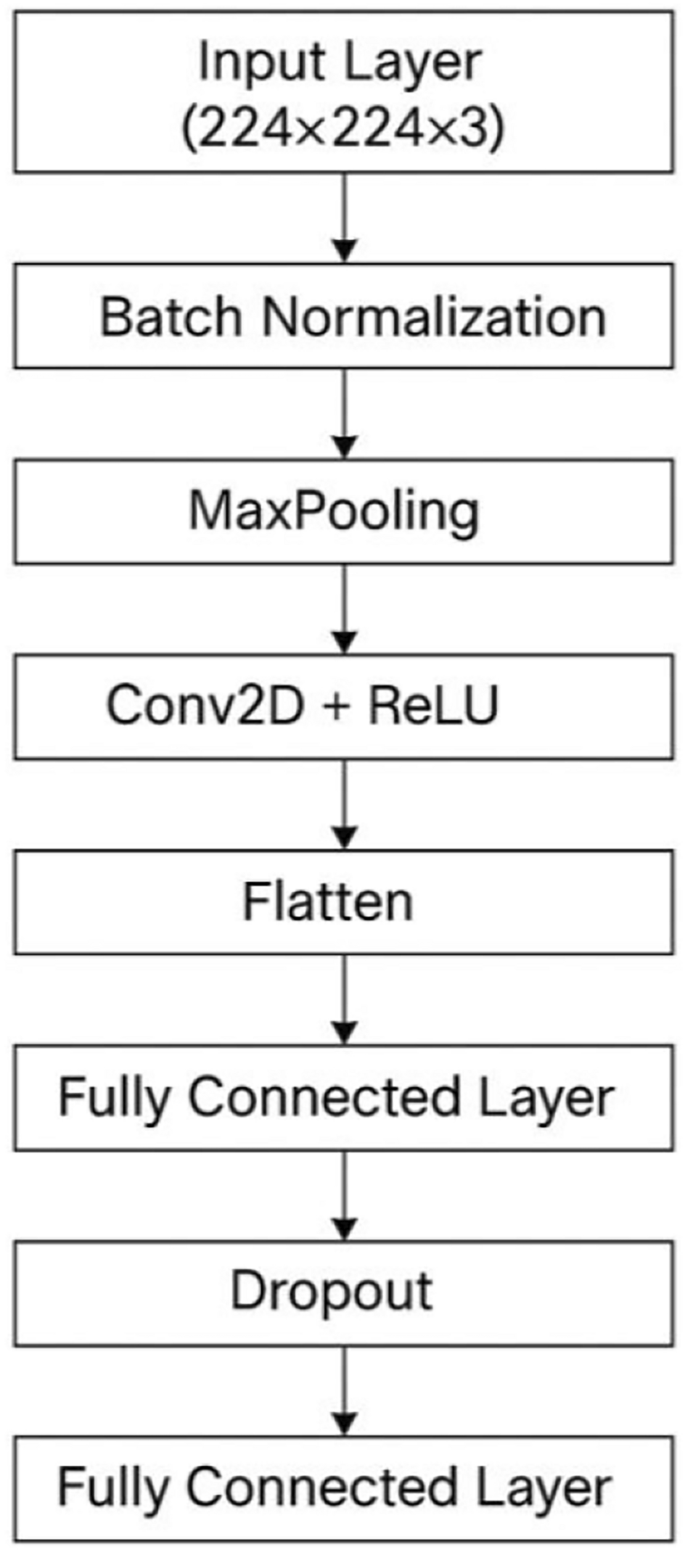

Overview of CNN layers

In this subsection, we provide an in-depth analysis of the CNN layers designed specifically for the severity classification of COVID-19 using medical imaging data. The CNN architecture, depicted in Figure 2, encompasses a systematic arrangement of layers that collectively perform feature extraction, dimensionality reduction, regularization, and final prediction tasks.

The initial layer, the Input Layer, accepts RGB medical images standardized to \(224\times 224\) pixels with three color channels (\(224\times 224\times 3\)). This fixed input size ensures uniformity and enables efficient batched training across the network. The input stage is followed by a Batch Normalization layer, which stabilizes learning by normalizing activations with the mean and variance computed over each mini-batch. This reduces internal covariate shift, accelerating convergence and improving the model's generalization. The subsequent Max-pooling layer, with a \(2\times 2\) pooling window, reduces the spatial dimensions of the feature maps. This retains the most salient responses while lowering computational cost, which helps limit overfitting and makes the learned feature representations more robust.

The core computational power of the CNN lies within the Convolutional Layer, where a \(3\times 3\) filter size is used along with the Rectified Linear Unit (ReLU) activation function. This layer efficiently captures local spatial patterns in the imaging data, transforming raw pixel information into meaningful hierarchical feature maps. The ReLU activation introduces necessary non-linearities, enabling the model to learn complex data representations crucial for accurate severity classification. Next, the Flatten Layer transforms the multi-dimensional feature maps from convolutional operations into a one-dimensional feature vector. This transformation is vital as it prepares the extracted features for processing by subsequent fully connected layers, facilitating seamless data flow within the network. The Fully Connected Layer, activated by ReLU, serves as the intermediate processing stage, integrating and interpreting features captured and extracted by preceding layers. It systematically connects neurons from the flattened layer, synthesizing high-level representations that enhance the predictive capacity of the model.
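The spatial size after each convolution or pooling layer follows \(\lfloor (n + 2p - k)/s \rfloor + 1\) for input size \(n\), kernel \(k\), padding \(p\), and stride \(s\). The sketch below traces shapes and trainable parameter counts through the layers described so far. The filter count (32), hidden width (128), "valid" (zero) padding, and pooling stride of 2 are hypothetical values chosen for illustration, since the text does not specify them.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size: floor((size + 2*padding - kernel) / stride) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_shapes(h=224, w=224, c=3, filters=32, hidden=128):
    """Shapes and trainable parameter counts, assuming valid padding and stride-2 pooling."""
    shapes = [("input", (h, w, c)), ("batch_norm", (h, w, c))]
    h, w = conv_out(h, 2, stride=2), conv_out(w, 2, stride=2)
    shapes.append(("max_pool_2x2", (h, w, c)))
    h, w = conv_out(h, 3), conv_out(w, 3)
    shapes.append(("conv_3x3_relu", (h, w, filters)))
    flat = h * w * filters
    shapes += [("flatten", (flat,)), ("dense_relu", (hidden,))]
    params = {
        "batch_norm": 2 * c,                          # gamma and beta per channel
        "conv_3x3_relu": 3 * 3 * c * filters + filters,  # weights + biases
        "dense_relu": flat * hidden + hidden,
    }
    return shapes, params


shapes, params = trace_shapes()
for name, shape in shapes:
    print(f"{name:>14}: {shape}")
print(params)
```

With these assumed settings, flattening a 110×110×32 map feeds 387,200 values into the dense layer, so that layer dominates the parameter count.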

To address potential overfitting and improve generalization, a Dropout layer is included. Dropout randomly deactivates a subset of neurons during training, with a dropout rate typically between 0.3 and 0.5, so that the network learns robust features without relying on specific neurons or paths. Finally, a second Fully Connected Layer with a Softmax activation produces the output. The Softmax converts the model's raw outputs (logits) into a probability distribution over the COVID-19 severity categories, and the most probable category is taken as the predicted severity level. This structured CNN framework is designed to support accurate, reliable, and clinically meaningful severity classification, informing clinical decision-making and timely patient interventions. Table 2 details the configuration and function of each layer, including input dimensions, convolutional parameters, activation functions, and the regularization strategies used to optimize performance and generalization.
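The Softmax output layer can be sketched in its numerically stable form, which subtracts the largest logit before exponentiating so that large logits do not overflow. The four severity labels and their order are hypothetical; the text states only that there are four categories, including mild and critical.

```python
import math

SEVERITY_CLASSES = ["mild", "moderate", "severe", "critical"]  # hypothetical label order


def softmax(logits):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_severity(logits):
    """Return the most probable severity label and the full probability vector."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return SEVERITY_CLASSES[best], probs


label, probs = predict_severity([0.1, 0.2, 3.0, 0.5])
```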

Optimization strategies for performance enhancement

In the pursuit of improving the performance of our CNN model for the task of classifying COVID-19 chest X-ray images, a series of strategic optimizations were implemented across multiple facets of the model development process. These optimizations were systematically applied to four key areas: data preparation, model architecture, training process, and loss function adjustment. The combination of these strategies enabled us to significantly enhance the accuracy, generalization, and overall performance of the model. Table 3 summarizes the comprehensive optimization strategies applied to enhance the CNN model’s performance in COVID-19 severity classification, detailing improvements in data preparation, architectural design, training procedures, loss function adjustments, and reporting the resulting metrics that validate its clinical applicability.

Data Preparation and Augmentation: Data quality and diversity are fundamental to the success of any deep learning model, particularly with imbalanced datasets such as medical images spanning different severity levels. To improve the robustness and generalization of our CNN model, we employed several image augmentation techniques, including random rotations, zooming, brightness adjustments, and noise injection. These transformations artificially expanded the training dataset, exposing the model to a wider variety of image patterns and reducing its susceptibility to overfitting. In addition, we used stratified sampling and oversampling. Stratified sampling ensured that the class distribution in the training and validation sets matched that of the full dataset, so that minority classes were represented in every split and performance estimates were not skewed toward the dominant classes. Oversampling was applied specifically to underrepresented classes, such as severely ill COVID-19 patients, so that the model learned to recognize these critical but less frequent conditions. This careful handling of the dataset was pivotal in achieving balanced learning across all categories.
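The source does not specify the oversampling method. A minimal version, random oversampling, duplicates minority-class samples (drawn with replacement) until every class matches the majority-class count. It should be applied to the training split only, after the train/validation split, so duplicated samples cannot leak into validation. The sample IDs below are hypothetical.

```python
import random
from collections import defaultdict


def random_oversample(samples, labels, seed=0):
    """Duplicate minority-class samples until all classes match the majority count."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for s, y in zip(samples, labels):
        by_class[y].append(s)
    target = max(len(items) for items in by_class.values())
    out_samples, out_labels = [], []
    for y, items in by_class.items():
        # Draw extra copies with replacement from the existing class members
        extra = [rng.choice(items) for _ in range(target - len(items))]
        for s in items + extra:
            out_samples.append(s)
            out_labels.append(y)
    return out_samples, out_labels


# Hypothetical training split: 8 mild images, 2 critical images
train_x = [f"m{i}" for i in range(8)] + ["c0", "c1"]
train_y = ["mild"] * 8 + ["critical"] * 2
bal_x, bal_y = random_oversample(train_x, train_y)
```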

Enhancements to Model Architecture: The CNN's structural design was a critical optimization area. To improve the network's capacity to learn intricate patterns from chest radiographs, we made several architectural modifications. First, we incorporated batch normalization, which normalizes layer inputs at each stage to stabilize and accelerate learning, improving convergence and mitigating problems such as vanishing gradients. This was particularly important for training deeper architectures efficiently. Second, we integrated dropout with a rate of 0.4. By stochastically disabling neurons during training, dropout forces the network to develop more resilient pattern recognition and reduces reliance on specific neuron combinations, thereby improving generalization. Third, we used transfer learning by incorporating pre-trained ResNet components, leveraging representations learned on large image datasets such as ImageNet. Because medical imaging often requires detecting subtle, specialized patterns, starting from pre-trained parameters helped our CNN identify characteristics in radiographs that would be difficult to learn from limited data alone. This transfer learning strategy substantially improved model performance, particularly accuracy and feature representation.

Training Process and Fine-Tuning: The training process was tuned systematically to identify optimal hyperparameters and ensure robustness. A grid search over combinations of learning rates, batch sizes, and activation functions identified the best-performing settings within the searched grid. Beyond hyperparameter optimization, we applied early stopping as a regularization technique and used 5-fold cross-validation for model assessment. Early stopping halted optimization once validation performance no longer improved, reducing the risk that the model memorized the training set and preserving its performance on new data. Five-fold cross-validation evaluated the model on several disjoint partitions of the dataset, which exposed overfitting more reliably than a single split and yielded a more dependable estimate of real-world performance.
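Early stopping is usually implemented with a "patience" counter: training stops once the validation loss has failed to improve for a set number of consecutive epochs, and the weights from the best epoch are restored. The sketch below shows that logic; the patience value, `min_delta`, and the loss sequence are hypothetical.

```python
class EarlyStopping:
    """Stop when validation loss has not improved by at least `min_delta`
    for `patience` consecutive epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical validation losses: improvement stalls after epoch 2
val_losses = [1.0, 0.8, 0.7, 0.71, 0.72, 0.73, 0.69]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, val_loss in enumerate(val_losses):
    if stopper.step(epoch, val_loss):
        stopped_at = epoch
        break
# Training stops at epoch 5; the checkpoint from stopper.best_epoch (2) would be restored
```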

Loss Function Adjustment for Class Imbalance: One of the most critical challenges in medical image classification, particularly for COVID-19, is class imbalance. Because severe cases are less frequent than mild ones in the dataset, the model could easily become biased toward predicting the majority class and perform poorly on underrepresented classes. To address this, we used a weighted cross-entropy loss, which penalizes misclassifications of underrepresented classes more heavily and thereby directs the model's attention to critical yet less frequent cases, such as patients with severe COVID-19 symptoms. As a result, the model was not only accurate overall but also sensitive to these less frequent but clinically crucial categories.
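Weighted cross-entropy multiplies each sample's loss, \(-\log p(y)\), by the weight of its true class \(w_y\). The batch loss below is normalized by the sum of the weights, mirroring the weighted-mean reduction of PyTorch's `CrossEntropyLoss`. The weights for mild (0.5) and critical (2.5) are those reported in the methodology; the weights for moderate and severe, and the class names and order, are hypothetical placeholders.

```python
import math

# mild and critical weights come from the text; moderate and severe are hypothetical
CLASS_WEIGHTS = {"mild": 0.5, "moderate": 1.0, "severe": 1.5, "critical": 2.5}
CLASSES = ["mild", "moderate", "severe", "critical"]


def weighted_cross_entropy(prob_rows, targets, weights=CLASS_WEIGHTS, classes=CLASSES):
    """Sum of -w[y] * log p(y) over the batch, divided by the sum of w[y]."""
    total_loss, total_weight = 0.0, 0.0
    for probs, y in zip(prob_rows, targets):
        w = weights[y]
        p_true = max(probs[classes.index(y)], 1e-12)  # guard against log(0)
        total_loss += -w * math.log(p_true)
        total_weight += w
    return total_loss / total_weight


# A poorly predicted critical case and a well predicted mild case
batch_probs = [[0.1, 0.1, 0.6, 0.2], [0.9, 0.05, 0.03, 0.02]]
batch_targets = ["critical", "mild"]
weighted = weighted_cross_entropy(batch_probs, batch_targets)
unweighted = weighted_cross_entropy(batch_probs, batch_targets,
                                    weights={c: 1.0 for c in CLASSES})
# The critical-class error dominates the weighted loss, so weighted > unweighted
```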

Together, these optimizations produced strong performance. In the binary classification setting, the model achieved an accuracy of 97.2%, a precision of 96.8%, and an Area Under the ROC Curve (AUC) of 0.987. These results demonstrate the effectiveness of our optimization strategies in improving the model's overall classification ability. The high AUC is particularly notable because it indicates strong discrimination between positive and negative cases across all decision thresholds. AUC measures how well the model ranks cases rather than whether its predicted probabilities are calibrated, and on that basis it supports the model's value as a discriminative tool for real-world medical image classification.
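The distinction between discrimination and calibration can be made concrete. Binary ROC AUC equals the probability that a randomly chosen positive case is scored above a randomly chosen negative one (ties count one half), so it depends only on the ranking of scores. In the sketch below, which uses hypothetical scores, squaring or halving all scores leaves the AUC unchanged even though the predicted probabilities, and therefore the calibration, change substantially.

```python
def roc_auc(labels, scores):
    """Binary ROC AUC via pairwise comparison (Mann-Whitney form); O(P*N)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


labels = [1, 1, 0, 0]
scores = [0.9, 0.6, 0.7, 0.1]                 # hypothetical predicted probabilities
auc_original = roc_auc(labels, scores)
auc_squared = roc_auc(labels, [s ** 2 for s in scores])   # same ranking
auc_halved = roc_auc(labels, [s / 2 for s in scores])     # badly miscalibrated
# All three AUC values are 0.75: AUC is unaffected by order-preserving changes
```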

By incorporating these strategies, we were able to significantly enhance the performance of our CNN model for classifying COVID-19 chest X-ray images. Each optimization step—ranging from data augmentation and model architecture modifications to fine-tuning and loss function adjustments—contributed to building a robust model capable of handling the complexities of medical image classification. The combined impact of these improvements ensured that the model achieved both high accuracy and reliability.
