Integrating advanced neural network architectures with privacy-enhanced encryption for secure and intelligent healthcare analytics

The approach used in this research is multi-dimensional, focusing on improving healthcare data analytics while ensuring security and privacy. First, the NeuroShield model is presented: a combination of an LSTM network with a cascaded CNN that effectively captures spatial and temporal features in healthcare data. Missing values are handled with KNN imputation to complete the data. Strong encryption techniques such as AES are employed to protect sensitive healthcare data, supported by access control mechanisms such as ABAC policies and MFA to manage data access. Additionally, differential privacy-based optimization algorithms are used to maintain individual privacy during model training on sensitive healthcare data. The overall methodology supports robust data analysis while protecting patient confidentiality and complying with privacy laws. Figure 2 illustrates the architecture of the proposed model.

Dataset collection

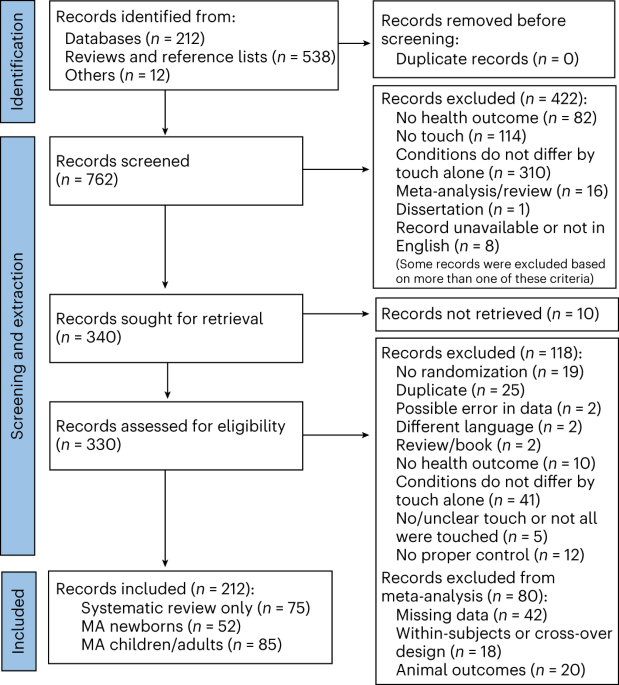

The model is trained on a healthcare dataset of synthetic patient records whose variables range from demographics and medical history to treatment information. The Healthcare Dataset available on Kaggle, containing 10,000 synthetic patient records, is a rich resource for this purpose27. Its variables include patient demographics, medical history, and admission details, among others. For health-related analysis and modeling, this large dataset proved useful. By examining patient characteristics, diseases, interventions, and outcomes, researchers can improve healthcare delivery and patient care procedures. Healthcare organizations can use the dataset for strategic planning and quality-improvement programs by learning from admission patterns, insurance coverage, and practitioner performance, to mention a few. Advanced statistical tools such as predictive modeling make it easier to predict patient admissions, estimate billing amounts, and project disease outcomes, which in turn improves the provision of healthcare services. To ensure patient privacy and confidentiality during analysis, the data must be managed responsibly through adequate preparation and adherence to all relevant laws.

Data preprocessing and cleaning

Anonymize personally identifiable information (PII)

To ensure patient privacy, personally identifiable information (PII) is de-identified through methods such as pseudonymization and generalization. For example, patient names are replaced with unique identifiers, and exact dates of birth are converted to ages.

Architecture of proposed model.

When removing such information from a healthcare database, the sensitive nature of PII must be taken into account. PII covers a wide range of data that can be used to identify a person, such as names, addresses, Social Security numbers, and medical records. To meet requirements such as HIPAA, organizations must maintain patient privacy, which calls for the complete or effective de-identification of personally identifiable data. De-identification methods are therefore paramount for balancing data utility against privacy protection. Methods such as pseudonymization and generalization preserve the analytical utility of the dataset. For example, assigning each patient a unique pseudonymous identifier instead of using their name protects privacy while still allowing effective data analysis. Similarly, exact dates of birth can be replaced with broader age groups to conceal individuals' identities without sacrificing the dataset's utility. This systematic de-identification protects patient privacy and supports regulatory compliance while keeping the data meaningful for research, modeling, and analysis.
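The pseudonymization and generalization steps described above can be sketched in plain Python. This is a minimal illustration, not the authors' pipeline: it assumes records are dictionaries with hypothetical `name` and `dob` fields, uses a keyed hash (HMAC-SHA256) as one common way to derive stable pseudonyms, and picks 10-year age bands purely for illustration. The key shown is a placeholder that would normally be stored outside the dataset.

```python
import hashlib
import hmac
from datetime import date

# Hypothetical placeholder key; in practice it would be kept in a key-management
# system, separate from the data, so pseudonyms cannot be recomputed by others.
SECRET_KEY = b"replace-with-a-securely-stored-key"


def pseudonymize(name: str, key: bytes = SECRET_KEY) -> str:
    """Map a name to a stable, keyed pseudonym (HMAC-SHA256, truncated)."""
    normalized = name.strip().lower().encode("utf-8")
    digest = hmac.new(key, normalized, hashlib.sha256).hexdigest()
    return "PID-" + digest[:12]


def age_on(dob: date, reference: date) -> int:
    """Replace an exact date of birth with the age in whole years on a reference date."""
    had_birthday = (reference.month, reference.day) >= (dob.month, dob.day)
    return reference.year - dob.year - (0 if had_birthday else 1)


def age_band(age: int, width: int = 10) -> str:
    """Generalize an age into a coarser band such as '40-49'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def deidentify(record: dict, reference: date) -> dict:
    """Return a copy of the record with direct identifiers removed or generalized."""
    out = {k: v for k, v in record.items() if k not in ("name", "dob")}
    out["patient_id"] = pseudonymize(record["name"])
    out["age_band"] = age_band(age_on(record["dob"], reference))
    return out
```

Because the pseudonym is keyed, the same patient always maps to the same identifier (so records can still be linked across tables), but the mapping cannot be recomputed without the key.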

Handle missing values and outliers

In healthcare data analysis, the accuracy and reliability of analytical models depend on how missing values and outliers are handled. Missing values (usually resulting from incomplete data collection or entry errors) and outliers (observations far from the bulk of the data) can substantially degrade the performance and accuracy of analytical models if left untreated. KNN imputation is applied to fill in missing values. This technique estimates each missing data point from the values of its nearest neighbors in the dataset, completing the data without compromising its integrity. In other words, KNN exploits similarities between records to impute missing values while preserving the dataset. Outliers must likewise be treated carefully so that they do not skew the results of the analysis; unusual data points that are not handled properly can distort statistical estimates and mislead model interpretation. Various techniques can be employed to handle outliers. Statistical techniques identify the outliers and mitigate them through transformations such as Winsorization, reducing their impact on the model. Numerical features are then scaled to a uniform range, which increases the stability of neural network training.
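A minimal pure-Python sketch of KNN imputation follows, assuming numeric features with missing entries marked as `None`. Distances use only the features that both rows have observed, a simplification of the NaN-aware distance used by library implementations such as scikit-learn's `KNNImputer`; the function name and the default `k=3` are illustrative assumptions.

```python
import math


def knn_impute(rows, k=3):
    """Fill None entries with the mean of that feature over the k nearest rows.

    Distance is Euclidean over the features both rows have observed. The input
    is not modified; a completed copy is returned.
    """
    filled = [list(r) for r in rows]
    for i, row in enumerate(rows):
        missing = [j for j, v in enumerate(row) if v is None]
        if not missing:
            continue
        # Distance from this row to every other row, on mutually observed features.
        candidates = []
        for m, other in enumerate(rows):
            if m == i:
                continue
            shared = [j for j in range(len(row))
                      if row[j] is not None and other[j] is not None]
            if not shared:
                continue
            d = math.sqrt(sum((row[j] - other[j]) ** 2 for j in shared))
            candidates.append((d, other))
        candidates.sort(key=lambda t: t[0])
        # For each missing feature, average the k closest rows that observed it.
        for j in missing:
            donors = [other[j] for _, other in candidates if other[j] is not None][:k]
            if donors:
                filled[i][j] = sum(donors) / len(donors)
    return filled
```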

For a healthcare dataset that contains outliers, Winsorization is a significant method for minimizing their influence while preserving the sample distribution. Outliers are observations that are very dissimilar from the others, and they can bias summary statistics and model outcomes. Winsorization offers an effective solution by capping extreme values at specified percentiles rather than removing them entirely. By setting upper and lower bounds at chosen percentiles, Winsorization keeps outliers from exerting disproportionate influence on the overall distribution. By tempering the effect of outliers, this process keeps the dataset representative of the broader population. Through Winsorization, analysts can keep the dataset accurate and reliable while drawing meaningful insights and making well-informed decisions with healthcare data.
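Winsorization at chosen percentiles can be sketched as below. The 5th/95th percentile defaults and the linear-interpolation percentile are illustrative assumptions, not values reported in the text; `scipy.stats.mstats.winsorize` offers a library alternative.

```python
def percentile(sorted_vals, q):
    """Linear-interpolation percentile (q in [0, 100]) of an already sorted list."""
    pos = (len(sorted_vals) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    frac = pos - lo
    return sorted_vals[lo] + (sorted_vals[hi] - sorted_vals[lo]) * frac


def winsorize(values, lower_pct=5.0, upper_pct=95.0):
    """Cap values below/above the given percentiles instead of removing them.

    The output keeps the original order and length; only extreme values change.
    """
    s = sorted(values)
    low, high = percentile(s, lower_pct), percentile(s, upper_pct)
    return [min(max(v, low), high) for v in values]
```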

Apply data transformation

Raw data must undergo transformation procedures before healthcare data can be analyzed and modeled effectively. These procedures are crucial for normalizing data structures, improving the quality of analytical models, and ensuring that machine learning algorithms operate correctly. Normalization, a fundamental transformation, rescales numerical features to a common range, such as between 0 and 1 (min-max scaling) or to zero mean and unit standard deviation (standardization). Normalizing numerical data makes features measured on different scales comparable and prevents features with large magnitudes from dominating the analysis. In addition to improving the convergence and stability of machine learning algorithms, it ensures that all features have a comparable effect on the model.
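Both normalization variants mentioned above, min-max scaling to [0, 1] and standardization to zero mean and unit standard deviation, are sketched below in plain Python. Mapping constant columns to all zeros is an assumption made here to avoid division by zero. In practice the scaling parameters would be computed on the training split only and reused for the validation split, so that no information leaks from validation data.

```python
import statistics


def min_max_scale(values):
    """Rescale to [0, 1]; a constant column maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def standardize(values):
    """Shift to zero mean and scale to unit (population) standard deviation."""
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]
```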

In addition, categorical data must be encoded before machine learning algorithms can analyze it. Binary encoding, a widely used approach, assigns each category an integer index and represents that index by its binary digits, so a variable with k categories needs only about log2(k) columns. This encoding represents categorical information compactly, using far fewer columns than one-hot encoding. Binary encoding can be applied to all categorical variables in the healthcare dataset, re-encoding them into a form that machine learning algorithms can process. Overall, these transformations ensure that the dataset is ready for analysis even when it mixes data types and scales. By reducing the effects of differing scales and categorical variables, they improve the convergence and performance of analytical models. As a result, analysts can use the processed data to draw more accurate conclusions and make better decisions. With these transformations, health data analysts can better tailor machine learning algorithms to personalized patient care, treatment, and health management, which is especially relevant for health data that is often heterogeneous in type and format.
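Binary encoding can be sketched as follows. This is one common variant, assumed here for illustration: categories receive integer codes in order of first appearance, starting at 1 so that no category maps to an all-zero row, and each code is written in binary across a fixed number of columns.

```python
import math


def binary_encode(column):
    """Binary-encode a categorical column.

    Returns the encoded rows and the category-to-code mapping. Codes start at 1,
    and ceil(log2(n_categories + 1)) bit columns are used.
    """
    index = {}
    for value in column:
        if value not in index:
            index[value] = len(index) + 1
    width = max(1, math.ceil(math.log2(len(index) + 1)))
    encoded = [[int(bit) for bit in format(index[v], f"0{width}b")] for v in column]
    return encoded, index
```

With three categories, two columns suffice, whereas one-hot encoding would need three; the saving grows with the number of categories.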

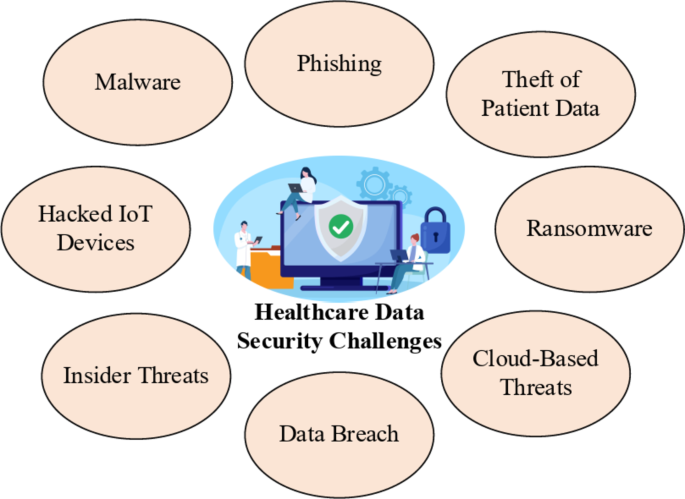

Data encryption and access control

To protect confidential patient information from unauthorized viewing and malicious manipulation, strong data encryption and access control must be established in the field of health data protection. Data encryption uses cryptographic techniques to transform plaintext into ciphertext that is unintelligible to any party without the key. This protection is essential for securing confidential health information during transmission and storage. By adopting strong encryption techniques, medical facilities can protect patient information and comply with standards such as GDPR and HIPAA. The model encrypts sensitive health information using AES. Encryption converts plaintext information (e.g., medical history) into ciphertext using an encryption key. This protects the data both in transit and at rest, guarding it against breaches and unauthorized interception. Figure 3 shows the flowchart of data security.

Encryption

$$\:C={E}_{k}\left(P\right)$$

(1)

Where \(\:C\) represents the ciphertext obtained by encrypting plaintext \(\:P\) using encryption key \(\:k\).

Decryption

$$\:P={D}_{k}\left(C\right)$$

(2)

Where \(\:P\) represents the original plaintext obtained by decrypting ciphertext \(\:C\) using decryption key \(\:k\). Because AES is a symmetric cipher, the same key \(\:k\) is used for both encryption and decryption.

Data access can therefore be better managed through stringent access control practices. Access control applies rules and procedures to limit access to a healthcare system based on predetermined roles and privileges. To ensure that only authorized personnel can obtain information, healthcare organizations need to grant specific permissions to each user group, such as clinicians, administrators, and support workers. Attribute-based access control (ABAC) is one of the most common ways to enforce access restrictions based on attributes of users, organizational structures, and context. With strong access controls, healthcare organizations can ensure that patient information is safeguarded. These measures help prevent insider threats, inadvertent data exposure, and unauthorized access to sensitive information.

Access control

$$\:{A}_{i,j}=\begin{cases}1 & \text{if user } i \text{ has access to data item } j\\ 0 & \text{otherwise}\end{cases}$$

(3)

It defines the access control matrix \(\:A\), where \(\:{A}_{i,j}\) denotes whether user \(\:i\) has access to data item \(\:j.\)

Attribute-based access control (ABAC) policy

Data access is governed by ABAC policies. Only users whose attributes (such as role, department, and permissions) satisfy the policy can access the encrypted data, which adds a further security layer on top of data encryption.

$$\:Permit=f\left(Attributes\right)$$

(4)

Here, \(f\) is a policy function that returns whether access to a resource is permitted, based on the attributes of the requesting entity.
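The policy function \(f\) in Eq. (4) can be sketched as a set of predicates over subject, resource, action, and context attributes, with access denied unless some rule matches. The attribute names and the two rules below are hypothetical examples, not the authors' policy. Evaluating `permit` for every user and data item would yield the access control matrix \(A\) of Eq. (3).

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    subject: dict                                 # e.g. {"role": "clinician", "department": "cardiology"}
    resource: dict                                # e.g. {"type": "medical_record", "department": "cardiology"}
    action: str                                   # e.g. "read"
    context: dict = field(default_factory=dict)   # e.g. {"mfa_verified": True}


# Hypothetical rules: each one is a predicate over the request's attributes.
RULES = [
    # Clinicians may read records of their own department, after MFA.
    lambda r: r.subject.get("role") == "clinician"
    and r.action == "read"
    and r.resource.get("department") == r.subject.get("department")
    and r.context.get("mfa_verified", False),
    # Billing staff may read billing data only.
    lambda r: r.subject.get("role") == "billing"
    and r.action == "read"
    and r.resource.get("type") == "billing",
]


def permit(request: Request) -> bool:
    """Permit = f(Attributes): allow if any rule matches; deny by default."""
    return any(rule(request) for rule in RULES)
```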

In addition to encryption and access control, healthcare data systems rely on secure authentication methods such as MFA. MFA reduces the risk of unauthorized access through phishing or credential theft by requiring users to verify their identity with multiple independent factors, such as passwords, cryptographic tokens, or biometric readings. By integrating MFA into their authentication procedures, healthcare providers can protect the confidentiality of their data, reduce the risk of unauthorized access, and increase their resilience to emerging threats in the cybersecurity landscape.

Multi-factor authentication (MFA)

$$\:{P}_{Auth}=MFA\left({P}_{Password},{P}_{Biometric},{P}_{Token}\right)$$

(5)

It represents the MFA process where \(\:{P}_{Auth}\) denotes the authentication result based on the password \(\:{P}_{Password},\) biometric scan \(\:{P}_{Biometric},\) and cryptographic token \(\:{P}_{Token}.\)
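Eq. (5) can be illustrated with a sketch in which authentication succeeds only if every factor passes. The token factor uses a time-based one-time password (TOTP, RFC 6238) built from the standard library, the password check uses PBKDF2, and the biometric factor is represented by a match score from a hypothetical matcher with an assumed threshold of 0.9. The iteration count and function names are illustrative assumptions.

```python
import hashlib
import hmac
import struct
import time

PBKDF2_ITERATIONS = 100_000  # illustrative work factor


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226): HMAC-SHA1 plus dynamic truncation."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: float, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the current time window."""
    return hotp(secret, int(at // step), digits)


def hash_password(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, PBKDF2_ITERATIONS)


def authenticate(password, salt, stored_hash, token, totp_secret,
                 biometric_score, threshold=0.9, now=None):
    """P_Auth = MFA(P_Password, P_Biometric, P_Token): all three factors must pass."""
    now = time.time() if now is None else now
    ok_password = hmac.compare_digest(hash_password(password, salt), stored_hash)
    ok_token = hmac.compare_digest(token, totp(totp_secret, now))
    ok_biometric = biometric_score >= threshold  # score from a hypothetical matcher
    return ok_password and ok_token and ok_biometric
```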

Together, data encryption, access controls, and secure authentication processes protect sensitive healthcare information from cyberattacks, data breaches, and unauthorized access. By adopting end-to-end data security, healthcare organizations can protect patient confidentiality, comply with regulations, and maintain the data integrity without which the healthcare system cannot function.

Anonymization

Advanced anonymization techniques are essential in healthcare datasets because they protect patient privacy while preserving the utility of the data. Differential privacy is an advanced method that seeks a balance between the two. It adds calibrated noise to query results or data releases so that the outcome of an analysis or query is not substantially affected by the presence or absence of any particular individual's data. Differential privacy thereby counters re-identification while keeping datasets useful for analytical and modeling work. In practice, noise is introduced so that it is effectively impossible to tell whether any particular individual's data is included, while the dataset still yields meaningful aggregate information. Such privacy-preserving data analysis is useful in many settings, and particularly in healthcare.

Furthermore, l-diversity is a robust anonymization technique employed to strengthen the privacy protection of anonymized data. To minimize the risk of re-identification through attribute disclosure, l-diversity requires that every equivalence class (a group of records sharing the same quasi-identifier values) contain at least l distinct values of the sensitive attribute. Because the sensitive values within each group are diverse, an attacker who links an individual to a group through quasi-identifiers still cannot infer that individual's sensitive attribute with certainty. By remedying the weakness of traditional anonymization techniques such as k-anonymity, which remain vulnerable to attribute-based attacks when all records in a group share the same sensitive value, this approach significantly enhances the privacy resistance of anonymized healthcare datasets. Integrating l-diversity and differential privacy into anonymization solutions enables healthcare organizations to preserve patient anonymity while supporting the research and data analysis that improve patient outcomes and healthcare delivery.

Differential privacy:

$$\frac{\Pr\left[\mathcal{M}(D)\in S\right]}{\Pr\left[\mathcal{M}(D')\in S\right]}\le\exp(\varepsilon)$$

(6)

This is the definition of differential privacy, where \(\mathcal{M}\) is a randomized mechanism, \(D\) is the dataset, \(D'\) is a neighboring dataset (differing from \(D\) in a single record), \(S\) is any set of possible outputs, and \(\varepsilon\) is the privacy parameter controlling the level of privacy protection; smaller values of \(\varepsilon\) give stronger privacy.
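The Laplace mechanism is a standard way to satisfy Eq. (6) for numeric queries: noise drawn from a Laplace distribution with scale equal to the query's sensitivity divided by ε is added to the true answer. The sketch below applies it to a counting query, whose sensitivity is 1. It is an illustrative example under these assumptions, not the specific mechanism used in the study; a production system would use a vetted differential-privacy library rather than Python's `random` module.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two i.i.d. exponential draws."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """epsilon-differentially private count via the Laplace mechanism.

    Adding or removing one patient changes a count by at most 1, so the
    sensitivity is 1 and the Laplace scale is sensitivity / epsilon.
    """
    true_count = sum(1 for r in records if predicate(r))
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)
```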

l-Diversity:

$$\:\forall\,i:\ \left|SA\left(QI{D}_{i}\right)\right|\ge\:l$$

(7)

This guarantees l-diversity, where \(\:QI{D}_{i}\) denotes the \(i\)-th equivalence class of dataset \(S\) (the records sharing the same quasi-identifier values), \(\:SA\left(QI{D}_{i}\right)\) is the set of distinct sensitive attribute values within that class, and \(l\) is the minimum diversity requirement. It ensures that every equivalence class contains at least \(l\) distinct sensitive values.
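Checking the l-diversity condition of Eq. (7) amounts to grouping records by their quasi-identifier values and counting the distinct sensitive values in each group. The sketch below implements this "distinct" variant of l-diversity; the parameter name `l_min` stands for \(l\), and the field names in any usage are hypothetical.

```python
from collections import defaultdict


def is_l_diverse(records, quasi_identifiers, sensitive, l_min):
    """Distinct l-diversity check.

    Every equivalence class (records sharing the same quasi-identifier values)
    must contain at least l_min distinct values of the sensitive attribute.
    """
    classes = defaultdict(set)
    for r in records:
        key = tuple(r[q] for q in quasi_identifiers)
        classes[key].add(r[sensitive])
    return all(len(values) >= l_min for values in classes.values())
```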

Differential privacy-based optimization

Implementing advanced optimization algorithms is crucial for drawing relevant conclusions from healthcare data under tight privacy regulations, where protecting patient privacy is essential. One such technique is differential privacy-based optimization, which is increasingly valuable for training machine learning models on sensitive healthcare information while protecting individual privacy. By adding precisely calibrated noise to the learning process, differential privacy-based optimization prevents the model's parameters and predictions from being disproportionately influenced by the addition or removal of any single individual's data. By building differential privacy guarantees into the optimization process, machine learning models can be trained on sensitive healthcare data without unauthorized disclosure or re-identification of patients.

Applying differential privacy-based optimization is particularly important in healthcare because the data are usually highly sensitive, including genetic profiles, disease diagnoses, and treatment histories. By integrating privacy-preserving mechanisms directly into optimization, healthcare organizations can make full use of machine learning algorithms without violating laws such as HIPAA or compromising patient privacy. By protecting the individual data of patients and stakeholders to the greatest possible extent, this approach promotes ethical data use in healthcare analytics and enables researchers and clinicians to gain useful insights from health data.

Let \(\:\theta\:\) represent the parameters of a machine learning model, \(\:\mathcal{D}\) denote the sensitive healthcare dataset, and \(\:\mathcal{L}\left(\theta\:,\mathcal{D}\right)\) represent the loss function associated with training the model on the dataset. The objective of differential privacy-based optimization is to minimize the following objective function:

$$\:\underset{\theta\:}{\text{minimize}}\;\mathcal{L}\left(\theta\:,\mathcal{D}\right)+\frac{\varepsilon}{n}\cdot\:\text{Sensitivity}$$

(8)

Where \(\varepsilon\) is the privacy parameter controlling the level of privacy protection, \(\:n\) is the number of individuals in the dataset, and \(\:\text{Sensitivity}\) represents the sensitivity of the loss function, i.e., the maximum change in the loss function's output due to the inclusion or exclusion of a single individual's data.
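Eq. (8) expresses privacy as a term in the training objective. A widely used way to obtain a formal differential-privacy guarantee during training is DP-SGD, which clips each example's gradient and adds Gaussian noise before every update; the sketch below swaps in that technique as one concrete illustration, not as the authors' stated procedure. Computing the resulting ε from the noise multiplier, sampling rate, and number of steps requires a privacy accountant, which is omitted here (libraries such as Opacus and TensorFlow Privacy provide one).

```python
import math
import random


def clip(grad, max_norm):
    """Scale a per-example gradient so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    factor = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * factor for g in grad]


def dp_sgd_step(params, per_example_grads, lr, max_norm, noise_multiplier, rng):
    """One DP-SGD update.

    Each example's gradient is clipped to max_norm (bounding any individual's
    influence), the clipped gradients are summed, Gaussian noise with standard
    deviation noise_multiplier * max_norm is added per coordinate, and the noisy
    sum is averaged over the batch before the gradient-descent step.
    """
    batch_size = len(per_example_grads)
    clipped = [clip(g, max_norm) for g in per_example_grads]
    noisy_mean = []
    for j in range(len(params)):
        total = sum(g[j] for g in clipped)
        total += rng.gauss(0.0, noise_multiplier * max_norm)
        noisy_mean.append(total / batch_size)
    return [p - lr * g for p, g in zip(params, noisy_mean)]
```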

By ensuring that patient privacy comes first during model training, differential privacy-based optimization also fosters an ethical culture of data management and compliance with regulatory requirements. By prioritizing both privacy and analytical accuracy, healthcare organizations can build trust in their data-centric initiatives and reduce their exposure to data breaches, unauthorized access, and algorithmic discrimination. Through the strategic implementation of sophisticated optimization techniques such as differential privacy-based optimization, healthcare organizations can harness the transformative potential of machine learning while ensuring patient privacy and promoting the responsible and ethical use of healthcare data to enhance patient care and population health outcomes.

Proposed NeuroShield model

Using a sophisticated architecture that combines an LSTM network with a cascaded CNN, the NeuroShield model offers an innovative approach to learning from the Kaggle Healthcare Dataset. Combining CNNs and LSTMs improves the model's ability to capture both the temporal and spatial nuances of health information, making it an effective tool for healthcare decision makers seeking actionable insights and better outcomes. By cascading CNNs with LSTMs, NeuroShield takes full advantage of the strengths of both network structures. CNNs are skilled at extracting spatial information, which makes them a reasonable choice for healthcare data. By using CNNs as its base layers, NeuroShield achieves strong feature extraction, enabling it to identify important patterns and abnormalities that may indicate a broad spectrum of medical conditions.

Placing LSTM networks after the CNN layers strengthens the NeuroShield model's capacity to identify sequential patterns and temporal dependencies in healthcare data. Analyzing time-series data, such as patient vital signs, lab test results, and prescription records, is extremely important when handling the Healthcare Dataset. The LSTM layers in the NeuroShield architecture enable it to capture patients' health trajectories over time, so that essential patterns and trends can be uncovered for prediction.

CNN feature extraction

The model starts by feeding input data (such as medical images or formatted patient data) through CNNs. CNN layers extract the spatial features through the application of convolutional filters to detect structures, patterns, and anomalies in the data.

$$\:{X}_{CNN}={f}_{CNN}\left({W}_{CNN}*{X}_{input}+{b}_{CNN}\right)$$

(9)

Here, \(\:{X}_{input}\) represents the input data, \(\:{W}_{CNN}\) denotes the convolutional filter weights, \(\:{b}_{CNN}\) represents the bias term, \(\:*\) denotes the convolution operation, and \(\:{f}_{CNN}\) represents the activation function used in the CNN layers. This equation describes the process of feature extraction by the CNN layers, where spatial features are extracted from the input data.
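For a one-dimensional signal such as a sequence of vital-sign readings, Eq. (9) reduces to sliding each filter along the input, adding the bias, and applying the activation. The plain-Python sketch below assumes ReLU as \(f_{CNN}\) and a "valid" (unpadded) convolution; like most deep-learning libraries, it actually computes cross-correlation (the kernel is not flipped). A real model would use a deep-learning framework rather than Python loops.

```python
def conv1d(x, kernel, bias=0.0, stride=1):
    """Valid 1-D convolution (cross-correlation, as in most deep-learning libraries)."""
    k = len(kernel)
    return [
        sum(kernel[m] * x[i + m] for m in range(k)) + bias
        for i in range(0, len(x) - k + 1, stride)
    ]


def relu(v):
    return [max(0.0, a) for a in v]


def cnn_features(x, kernels, biases):
    """X_CNN = f_CNN(W_CNN * X_input + b_CNN), one output channel per kernel."""
    return [relu(conv1d(x, w, b)) for w, b in zip(kernels, biases)]
```

With the kernel `[-1, 1]`, the filter responds to increases between consecutive readings, so a sudden spike in a vital sign produces a large activation at that position.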

Applying the NeuroShield model, a combination of CNNs and LSTMs, to the Healthcare Dataset has several advantages. First, by using the hierarchical features extracted by the CNNs in the early layers, the model can learn abstract representations of spatial features, such as anatomical features and pathological lesions, that are vital for accurate diagnosis and prognosis. The LSTM layers then enable the model to track how these spatial features vary over time, which in turn allows it to capture disease progression, treatment effectiveness, and the patient's overall condition.

LSTM temporal modeling

The features that are extracted are then fed into LSTM networks, which learn temporal dependencies and sequential patterns in health data, for example, patient vitals over time.

$$\:{H}_{LSTM}={f}_{LSTM}\left({W}_{LSTM}\cdot\:{X}_{temporal}+{U}_{LSTM}\cdot\:{H}_{LSTM}^{\left(t-1\right)}+{b}_{LSTM}\right)$$

(10)

Here, \(\:{X}_{temporal}\) represents the temporal input data, \(\:{W}_{LSTM}\) and \(\:{U}_{LSTM}\) denote the weights matrices for the input and recurrent connections, respectively, \(\:{b}_{LSTM}\) represents the bias term, \(\:{f}_{LSTM}\) is the LSTM activation function, and \(\:{H}_{LSTM}^{\left(t-1\right)}\) represents the previous hidden state. It describes the temporal modeling process by the LSTM layers, capturing sequential patterns and dependencies in the input data.
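Eq. (10) summarizes the LSTM as a single activation over the current input and the previous hidden state. A standard LSTM cell realizes this through input, forget, and output gates and a separate cell state, as sketched below. To keep the example short, the hidden state is a scalar; the weight dictionaries are hypothetical placeholders for learned parameters.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dot(w, x):
    return sum(a * b for a, b in zip(w, x))


def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One step of a standard LSTM cell with a scalar hidden state.

    W[g] is the input weight vector, U[g] the recurrent weight, and b[g] the bias
    for gate g in {"i", "f", "o", "g"} (input, forget, output, candidate).
    """
    i = sigmoid(dot(W["i"], x_t) + U["i"] * h_prev + b["i"])
    f = sigmoid(dot(W["f"], x_t) + U["f"] * h_prev + b["f"])
    o = sigmoid(dot(W["o"], x_t) + U["o"] * h_prev + b["o"])
    g = math.tanh(dot(W["g"], x_t) + U["g"] * h_prev + b["g"])
    c_t = f * c_prev + i * g      # cell state carries long-term memory
    h_t = o * math.tanh(c_t)      # hidden state H_LSTM at step t
    return h_t, c_t


def run_lstm(sequence, W, U, b):
    """Unroll the cell over a sequence of feature vectors; return the final hidden state."""
    h, c = 0.0, 0.0
    for x_t in sequence:
        h, c = lstm_step(x_t, h, c, W, U, b)
    return h
```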

The cascaded architecture of the NeuroShield model also ensures seamless combination and interaction between the CNN and LSTM sub-modules, so that the complementary information each captures in its own domain reinforces the other. This enhances the model's predictive capacity and robustness and supports reliable prediction on the Healthcare Dataset.

NeuroShield model output

The final output is generated by combining features extracted by CNN and LSTM layers and passing them through a fully connected layer with an appropriate activation function:

$$\:\widehat{Y}={f}_{output}\left({W}_{output}\cdot\:concat\left({X}_{CNN},{H}_{LSTM}\right)+{b}_{output}\right)$$

(11)

Here, \(\:\widehat{Y}\) represents the predicted output, \(\:{f}_{output}\) is the output activation function, \(\:{W}_{output}\) and \(\:{b}_{output}\) denote the weights and bias for the output layer, respectively, and \(\:concat\left({X}_{CNN},{H}_{LSTM}\right)\) represents the concatenation of features extracted by the CNN and LSTM layers. This equation describes how the features extracted by the CNN and LSTM layers are combined to generate the final output prediction.
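Eq. (11) can be sketched as a concatenation followed by a dense layer. The sketch assumes softmax as \(f_{output}\), which pairs naturally with the cross-entropy loss used for training; the function name `neuroshield_head` and the weight shapes are hypothetical.

```python
import math


def dense(x, W, b):
    """Affine layer: one row of W (and one bias) per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + bias for row, bias in zip(W, b)]


def softmax(z):
    m = max(z)                          # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def neuroshield_head(x_cnn, h_lstm, W_out, b_out):
    """Y_hat = softmax(W_out . concat(X_CNN, H_LSTM) + b_out)."""
    features = list(x_cnn) + list(h_lstm)   # concatenation of both feature sets
    return softmax(dense(features, W_out, b_out))
```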

The NeuroShield model's versatile structure supports a range of healthcare analytics functions. For example, the model can analyze healthcare and patient data over time to support accurate diagnosis, enabling early intervention and timely treatment planning. By using longitudinal patient information for predictive modeling of patient outcomes, disease progression, and treatment efficacy, NeuroShield can enable more targeted healthcare interventions and improve resource utilization.

In addition, the NeuroShield model can identify outlier and high-risk patients so that they can be managed proactively, even in complex and heterogeneous datasets. By exploiting the joint capacity of CNNs and LSTMs, NeuroShield helps analysts and providers derive valuable insights from healthcare data, improving the quality, effectiveness, and efficiency of healthcare. Responsible data management, such as proper preprocessing and regulatory compliance, is essential for any analysis. When using NeuroShield to derive meaningful insights from healthcare data, analysts can maintain their integrity and credibility by giving high priority to patient privacy and confidentiality and following regulatory guidelines such as HIPAA. Combining advanced analytics with responsible data management, the NeuroShield model shows considerable promise for improving healthcare analytics and patient outcomes in real-life scenarios.

Privacy-preserving on encrypted data

Privacy-preserving solutions are required for healthcare analytics, especially when handling sensitive patient data. Secure Multiparty Computation (SMC) and homomorphic encryption are examples of techniques for privacy-preserving computation on encrypted data. These methods allow multiple parties to analyze encrypted data jointly while keeping the original data private. Homomorphic encryption is a class of cryptographic schemes that allows computations to be performed on ciphertexts without first decrypting them. As a result, the data remain encrypted throughout analysis and computation. Healthcare organizations can enforce strict privacy rules and use homomorphic encryption to protect data exchanged with analysts or other third parties. For example, patient privacy can be protected when multiple healthcare providers or research projects collaborate.

Secure Multiparty Computation (SMC), on the other hand, allows multiple participants to jointly compute a function over their private inputs without revealing those inputs to one another. In healthcare analytics, SMC makes it easy for various organizations, including government agencies, medical facilities, and research centers, to work together on data analysis tasks while protecting patient privacy. This collaborative approach increases the usefulness of healthcare data by facilitating cross-institutional analysis and research without endangering patient privacy. Privacy-preserving computation on encrypted data, which combines homomorphic encryption with SMC, provides a strong foundation for collaborative analysis while protecting patient privacy. For example, when several healthcare organizations want to examine their patient data jointly to identify patterns or trends, homomorphic encryption can be used to encrypt the data before sharing it with a third party. SMC protocols then enable shared computation on the encrypted data, allowing knowledge to be extracted without disclosing sensitive information.

Homomorphic encryption

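$$\:{D}_{HE}\left(\hat{f}\left(HE\left(x\right)\right)\right)=f\left(x\right)$$

(12)

Here, \(\:f\left(x\right)\) represents a function applied to plaintext data \(\:x\), \(\:HE\left(x\right)={E}_{HE}\left(x\right)\) is the encrypted version of the data produced by the homomorphic encryption function \(\:{E}_{HE}\), \(\:\hat{f}\) is the corresponding operation evaluated on ciphertexts, and \(\:{D}_{HE}\) denotes decryption. Computations can therefore be performed directly on the encrypted data, and decrypting the result yields \(\:f\left(x\right)\).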
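As a concrete instance of the homomorphic property in Eq. (12), the Paillier cryptosystem is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. The sketch below uses deliberately tiny primes so that it runs instantly; it is insecure and only illustrates the property for \(f\) = addition. The text does not name a specific scheme, so Paillier is an assumption here; fully homomorphic schemes support richer functions at greater computational cost.

```python
import math
import random

# Deliberately tiny primes: insecure, for illustration only.
P, Q = 293, 433
N = P * Q
N_SQ = N * N
G = N + 1                       # common simplified choice of generator
LAM = math.lcm(P - 1, Q - 1)    # Carmichael function lambda(N)
MU = pow(LAM, -1, N)            # modular inverse; valid because G = N + 1


def encrypt(m: int, rng: random.Random) -> int:
    """c = g^m * r^N mod N^2, with r drawn at random and coprime to N."""
    while True:
        r = rng.randrange(1, N)
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """m = L(c^lambda mod N^2) * mu mod N, where L(u) = (u - 1) // N."""
    u = pow(c, LAM, N_SQ)
    return ((u - 1) // N * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Homomorphic addition: the product of ciphertexts encrypts m1 + m2 (mod N)."""
    return (c1 * c2) % N_SQ
```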

Secure multiparty computation (SMC)

$$\:SMC\left(f\left({x}_{1},{x}_{2},\dots\:,{x}_{n}\right)\right)=f\left({x}_{1},{x}_{2},\dots\:,{x}_{n}\right)$$

(13)

Here, \(\:f\left({x}_{1},{x}_{2},\dots\:,{x}_{n}\right)\) represents a function applied to private inputs \(\:{x}_{1},{x}_{2},\dots\:,{x}_{n}\) from multiple parties. The SMC protocol guarantees that the function is calculated without disclosing individual inputs to any party, maintaining privacy while facilitating collaborative computations.
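One of the simplest SMC constructions is additive secret sharing, sketched below for the case where \(f\) is a sum, such as hospitals jointly computing a total patient count. Each input is split into random shares that individually reveal nothing, and only the combined total is reconstructed. The modulus, the function names, and the assumption of honest-but-curious parties are illustrative; for brevity, all shares are generated in one process here, whereas in a real protocol each party creates its own shares.

```python
import random

MODULUS = 2**61 - 1  # public prime modulus for all share arithmetic


def share(secret: int, n_parties: int, rng: random.Random) -> list:
    """Split a secret into n additive shares that sum to it modulo MODULUS."""
    shares = [rng.randrange(MODULUS) for _ in range(n_parties - 1)]
    shares.append((secret - sum(shares)) % MODULUS)
    return shares


def secure_sum(private_inputs: list, rng: random.Random) -> int:
    """Additive-sharing sum: party j adds up the j-th share of every input.

    Any n - 1 shares of one input are uniformly random, so no single party
    learns another party's input; only the final total is revealed.
    """
    n = len(private_inputs)
    all_shares = [share(x, n, rng) for x in private_inputs]   # row i: shares of input i
    partial_sums = [sum(row[j] for row in all_shares) % MODULUS for j in range(n)]
    return sum(partial_sums) % MODULUS
```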

In addition, privacy-preserving computation on encrypted data supports compliance with the ethical and legal requirements that govern how healthcare data is handled. With privacy-preserving techniques, comprehensive analysis and research can continue while meeting the strict requirements of laws such as HIPAA, which aim to protect patient privacy and confidentiality. By using these strategies, healthcare facilities demonstrate their commitment to upholding patients' rights and trust. However, implementing these privacy-preserving measures requires careful evaluation of their technical complexity and their effect on performance. Homomorphic encryption, for example, introduces a substantial computational burden that can slow down data processing and analysis. SMC protocols may also require coordination among parties and significant computational resources. Organizations should therefore weigh the benefits and drawbacks of these privacy-preserving strategies before deploying them in real healthcare settings.

Combined privacy-preserving computation

$$\:PPED\left(f\left({x}_{1},{x}_{2},\dots\:{x}_{n}\right)\right)={E}_{HE}\left(SMC\left(f\left(HE\left({x}_{1}\right),HE\left({x}_{2}\right),\dots\:,HE\left({x}_{n}\right)\right)\right)\right)$$

(14)

Here, \(\:PPED\) represents the privacy-preserving on encrypted data operation. The equation combines homomorphic encryption and SMC to perform collaborative computations on encrypted inputs \(\:HE\left({x}_{1}\right),HE\left({x}_{2}\right),\dots\:,HE\left({x}_{n}\right)\), ensuring privacy while enabling joint analysis on encrypted data.

Secure multiparty computation and homomorphic encryption are two techniques that enable cooperative analysis of encrypted data while protecting patient privacy. These methods support safe data sharing and joint computation, allowing healthcare organizations to extract useful insights from private medical data without violating confidentiality. As healthcare research and decision-making become increasingly data-driven, privacy-preserving techniques will become more important for guaranteeing the ethical and proper use of patient data.

Model training

The NeuroShield model is trained on the preprocessed healthcare dataset through supervised learning, in which the model learns from labeled examples to identify patterns and relationships in the data. The dataset is divided into training and validation sets in an 80:20 ratio: 80% of the data is used to train the model and 20% to evaluate its performance and detect overfitting. During training, the model optimizes a specified loss function, here the cross-entropy loss. Cross-entropy is especially useful for classification tasks because it measures the difference between the probability distribution predicted by the model and the true distribution of classes in the data. The weights of the neural network are learned with the Adam optimizer. Adam (Adaptive Moment Estimation) is chosen for its ability to adapt the effective learning rate of each parameter during training, which makes it efficient at converging to a loss minimum even in high-dimensional and complex data spaces. This iterative process continues until the model reaches satisfactory accuracy on the training set, while the validation set is used to gauge the model's generalizability.
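The training setup described above (80:20 split, cross-entropy loss, Adam) can be sketched on a small stand-in model. The sketch assumes binary classification and uses logistic regression in place of the full NeuroShield network so that the loop stays short; Adam uses its usual default moment coefficients, and the learning rate defaults to the 0.001 reported in the text. The function names are hypothetical.

```python
import math
import random


def train_val_split(data, train_frac=0.8, seed=42):
    """Shuffle and split into training and validation sets (80:20 by default)."""
    items = list(data)
    random.Random(seed).shuffle(items)
    cut = int(len(items) * train_frac)
    return items[:cut], items[cut:]


def binary_cross_entropy(p, y, eps=1e-12):
    p = min(max(p, eps), 1 - eps)   # avoid log(0)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


class Adam:
    """Adam optimizer with the usual defaults (beta1=0.9, beta2=0.999, eps=1e-8)."""

    def __init__(self, n_params, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.b1, self.b2, self.eps = lr, beta1, beta2, eps
        self.m = [0.0] * n_params   # first-moment (mean) estimates
        self.v = [0.0] * n_params   # second-moment (uncentered variance) estimates
        self.t = 0

    def step(self, params, grads):
        self.t += 1
        out = []
        for j, (p, g) in enumerate(zip(params, grads)):
            self.m[j] = self.b1 * self.m[j] + (1 - self.b1) * g
            self.v[j] = self.b2 * self.v[j] + (1 - self.b2) * g * g
            m_hat = self.m[j] / (1 - self.b1 ** self.t)   # bias correction
            v_hat = self.v[j] / (1 - self.b2 ** self.t)
            out.append(p - self.lr * m_hat / (math.sqrt(v_hat) + self.eps))
        return out


def predict(params, x):
    w, b = params[:-1], params[-1]
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))


def mean_loss(params, data):
    return sum(binary_cross_entropy(predict(params, x), y) for x, y in data) / len(data)


def fit(train, epochs=200, lr=0.001):
    params = [0.0] * (len(train[0][0]) + 1)   # weights plus bias
    opt = Adam(len(params), lr=lr)
    for _ in range(epochs):
        grads = [0.0] * len(params)
        for x, y in train:                      # full-batch gradient of the BCE loss
            err = predict(params, x) - y        # dLoss/dz for sigmoid + cross-entropy
            for j, xj in enumerate(x):
                grads[j] += err * xj / len(train)
            grads[-1] += err / len(train)
        params = opt.step(params, grads)
    return params
```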

Hyperparameter tuning

To further improve the model's performance, important hyperparameters are optimized, including the learning rate, batch size, and network depth. The learning rate, set to 0.001, determines how much the model's weights are adjusted at each gradient descent step. Lower learning rates make smaller updates, which can lead to more accurate but slower convergence. The batch size of 64 controls the number of samples the model processes before each weight update; choosing the batch size balances model performance against computational cost. The number of CNN and LSTM layers is varied according to the specific features of the dataset. For example, the LSTM component can use several LSTM layers to capture temporal dependencies in the data efficiently, while the CNN component can use several convolutional layers to detect complex spatial patterns. Hyperparameter tuning is performed with grid search, a systematic approach that exhaustively evaluates a specified set of hyperparameter combinations to find the optimal one. To optimize the model for accuracy and generalization, grid search evaluates the model on the validation set for each combination and identifies the best-performing configuration.
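Grid search can be sketched with `itertools.product`, which enumerates every combination in the grid. The `evaluate` callback is a hypothetical stand-in for training the model with a given configuration and returning its validation accuracy; grid values used with it are illustrative.

```python
from itertools import product


def grid_search(evaluate, grid):
    """Exhaustively evaluate every hyperparameter combination and keep the best.

    `grid` maps each hyperparameter name to a list of candidate values;
    `evaluate` maps a config dict to a validation score (higher is better).
    """
    names = list(grid)
    best_config, best_score = None, float("-inf")
    for values in product(*(grid[n] for n in names)):
        config = dict(zip(names, values))
        score = evaluate(config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score
```

For example, a grid of three learning rates, three batch sizes, and two LSTM depths requires 3 × 3 × 2 = 18 training runs, which is why grid search becomes expensive as hyperparameters are added.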

Novelty of the work

This work is novel in several respects, offering new approaches to fundamental problems in healthcare data analytics. Its foundation is the NeuroShield model, a novel architecture that combines cascaded CNNs with an LSTM network. This combination enables the model to efficiently detect both temporal and spatial patterns in health data, improving the accuracy and interpretability of the analysis results. In addition, the design incorporates a wide range of security measures, including ABAC policies and MFA for access control and AES for data encryption. These controls, an essential component of healthcare data management, preserve the privacy and integrity of patient information. The use of differential privacy-based optimization techniques further distinguishes this study by allowing machine learning models to be trained on sensitive health data while preserving individual privacy. This emphasis on privacy-preserving techniques reflects a forward-thinking approach to healthcare data analytics. Overall, by combining broad applicability with problem-specific design, the framework has the potential to enhance medical research and patient care, making a novel contribution to the field of healthcare data analytics.
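The paper does not state which differential privacy-based optimizer it uses; one widely used choice is DP-SGD (Abadi et al., 2016), sketched below as an illustrative assumption. Each example's gradient is clipped to an L2 norm of at most `max_norm`, so no single patient record can shift the update by more than a bounded amount, and Gaussian noise with standard deviation `noise_multiplier * max_norm` is added to the summed gradients before averaging. The parameter values in the usage lines are hypothetical, and the privacy budget (ε, δ) implied by a given noise level must be computed separately with a privacy accountant, which this sketch omits.

```python
import math
import random


def clip(grad, max_norm):
    """Scale a per-example gradient so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def dp_sgd_step(params, per_example_grads, lr, max_norm, noise_multiplier, rng):
    """One DP-SGD update: clip each example's gradient, sum, add Gaussian noise
    with std = noise_multiplier * max_norm, average over the batch, then step."""
    batch = len(per_example_grads)
    summed = [0.0] * len(params)
    for grad in per_example_grads:
        for i, g in enumerate(clip(grad, max_norm)):
            summed[i] += g
    noisy = [(s + rng.gauss(0.0, noise_multiplier * max_norm)) / batch for s in summed]
    return [p - lr * g for p, g in zip(params, noisy)]


rng = random.Random(0)
params = [0.0, 0.0]
# Hypothetical per-example gradients: the first exceeds the clipping norm, the second does not.
grads = [[3.0, 4.0], [0.3, 0.4]]
noisy_update = dp_sgd_step(params, grads, lr=0.1, max_norm=1.0, noise_multiplier=1.1, rng=rng)
# With noise disabled, clipping alone bounds each example's influence on the step.
clean_update = dp_sgd_step(params, grads, lr=1.0, max_norm=1.0, noise_multiplier=0.0, rng=rng)
```

In the noise-free call, the large gradient [3, 4] is scaled down to [0.6, 0.8] before averaging, so the step is [-0.45, -0.6] rather than being dominated by that one record.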

Explainable AI

To improve interpretability for clinicians, the research incorporates explainable AI (XAI) methods, in particular the SHapley Additive exPlanations (SHAP) approach, which offers transparent explanations of how NeuroShield arrives at its predictions. SHAP quantifies the contribution of each input feature to an individual prediction of the model, making deep learning models more transparent. Integration begins with generating SHAP values for every feature in the dataset, so that healthcare practitioners can see how different patient characteristics, such as medical history, laboratory results and imaging data, influence the outcome. These SHAP values are visualized with summary plots, dependence plots and force plots, which provide intuitive insight into feature interactions and their influence on predictions. Moreover, local explanations are provided for individual patient-level predictions, so that doctors can verify the model's suggestions before relying on them for significant decisions. By incorporating SHAP, NeuroShield increases accountability: healthcare workers can explain AI-driven insights and link predictions to clinical expertise. The incorporation of XAI techniques also supports regulatory compliance by providing explainability in AI-assisted healthcare decisions, in line with regulations such as GDPR and HIPAA. Despite these advantages, challenges remain in computing SHAP values efficiently for large datasets and in converting AI-generated explanations into actionable clinical insights. Addressing these challenges helps ensure that NeuroShield is not only accurate and powerful but also explainable and reliable, encouraging wider adoption.
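SHAP values are Shapley values from cooperative game theory: a feature's attribution is its marginal contribution to the prediction, averaged over all subsets S of the other features with weight |S|!(n − |S| − 1)!/n!. The sketch below computes them exactly by enumeration, assuming for simplicity that an "absent" feature is replaced by a single baseline value rather than averaged over a background dataset. The `risk_score` model and the patient and baseline values are hypothetical. Enumeration costs O(2^n) model evaluations, which illustrates the computational-efficiency challenge noted in the text; practical SHAP tooling relies on approximations such as KernelSHAP or on model-specific algorithms instead.

```python
import itertools
import math


def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x, with absent features set to `baseline`.

    Requires O(2^n) evaluations of f, so it is only practical for a few features.
    """
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for subset in itertools.combinations(others, size):
                present = set(subset)
                # Input with only the features in `subset` taken from x ...
                z_without = [x[j] if j in present else baseline[j] for j in range(n)]
                # ... and the same input with feature i added.
                z_with = list(z_without)
                z_with[i] = x[i]
                phi[i] += weight * (f(z_with) - f(z_without))
    return phi


def risk_score(z):
    """Hypothetical risk model: age, systolic blood pressure, prior admissions."""
    age, bp, prior = z
    return 0.02 * age + 0.01 * bp + 0.5 * prior + 0.001 * age * prior


patient = [70, 150, 2]
reference = [50, 120, 0]  # hypothetical "typical patient" baseline
phi = shapley_values(risk_score, patient, reference)
print([round(p, 4) for p in phi])
```

The attributions satisfy SHAP's additivity (efficiency) property: they sum to the difference between the patient's score and the baseline score, here 4.04 − 2.2 = 1.84. Blood pressure enters the model linearly, so its attribution is simply 0.01 × (150 − 120) = 0.3, while the age × prior-admissions interaction is split between those two features.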
